DeepSeek-V4 Preview: 1M Context, Top-Tier Agent Coding, and Two Tiers for Every Workflow

DeepSeek-V4 Preview launches on April 24, 2026 with two tiers: V4-Pro is the best open-source agent coding model and approaches Claude Opus 4.6 (non-thinking) on general benchmarks; V4-Flash is the fast, economical tier that matches V4-Pro on simple reasoning tasks. Both ship with a 1M context window as standard, powered by a new attention architecture (token-level compression + DSA) that cuts long-context compute by up to 6× compared to V3.2. The release also adds agent framework optimization for Claude Code, OpenClaw, OpenCode, and CodeBuddy, and publishes open weights on Hugging Face and ModelScope.

- V4-Pro is the best open-source agent coding model, close to Claude Opus 4.6 (non-thinking).

- V4-Flash matches V4-Pro on simple tasks at lower latency and cost.

- 1M context is standard for all DeepSeek services — no extra tier or pricing.

What launched: DeepSeek-V4 Preview

DeepSeek-V4 Preview is the first public release of the V4 generation. It ships two models — V4-Pro and V4-Flash — that share a new attention architecture but target different latency and cost profiles.

The headline change is practical, not just architectural: every DeepSeek official service now provides a 1M token context window by default. There is no separate pricing tier, no special flag, and no hidden context surcharge for long documents.

DeepSeek-V4-Pro: capabilities and benchmarks

V4-Pro targets the hardest tasks: agent coding, complex reasoning, and knowledge-heavy queries. The launch benchmarks position it as the strongest open-source model in agent coding and competitive with top-tier closed-source models across multiple categories.

- Agent coding: best among open-source models, competitive with Claude Opus 4.6 (non-thinking) on general benchmarks.

- World knowledge: second only to Gemini-Pro-3.1; ahead of GPT-5.4 and Claude Opus 4.6.

- Reasoning: top-tier in math, STEM, and competitive programming benchmarks.

- 1M context window with up to 6× less compute and memory than V3.2 at long context.

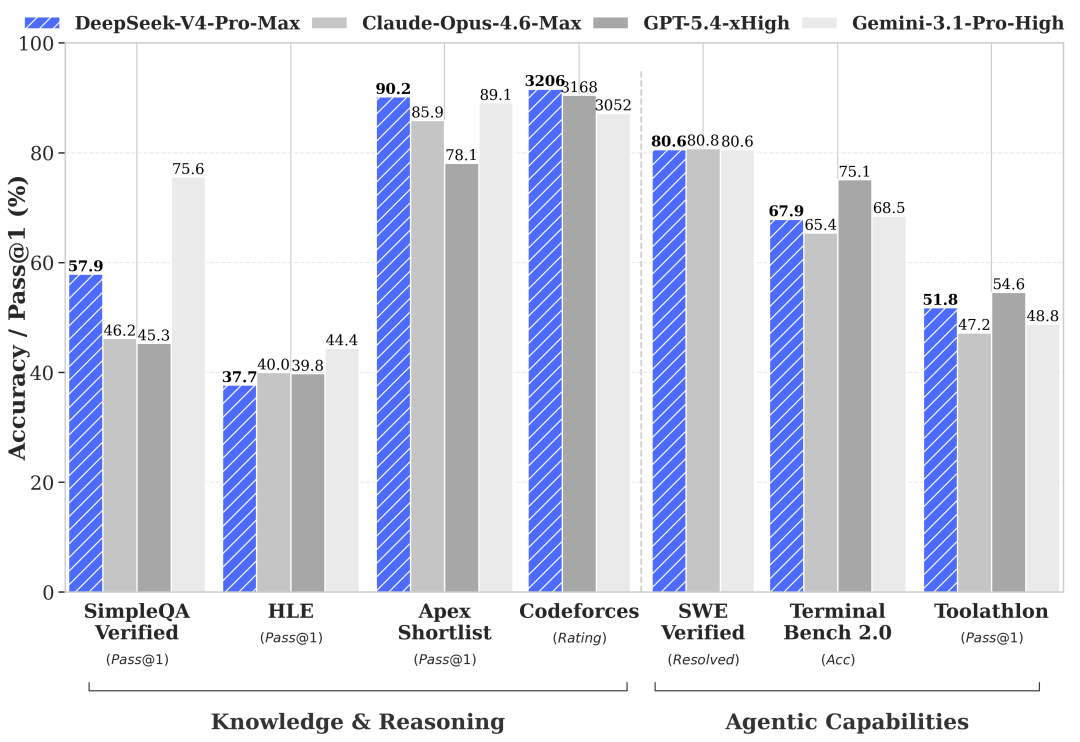

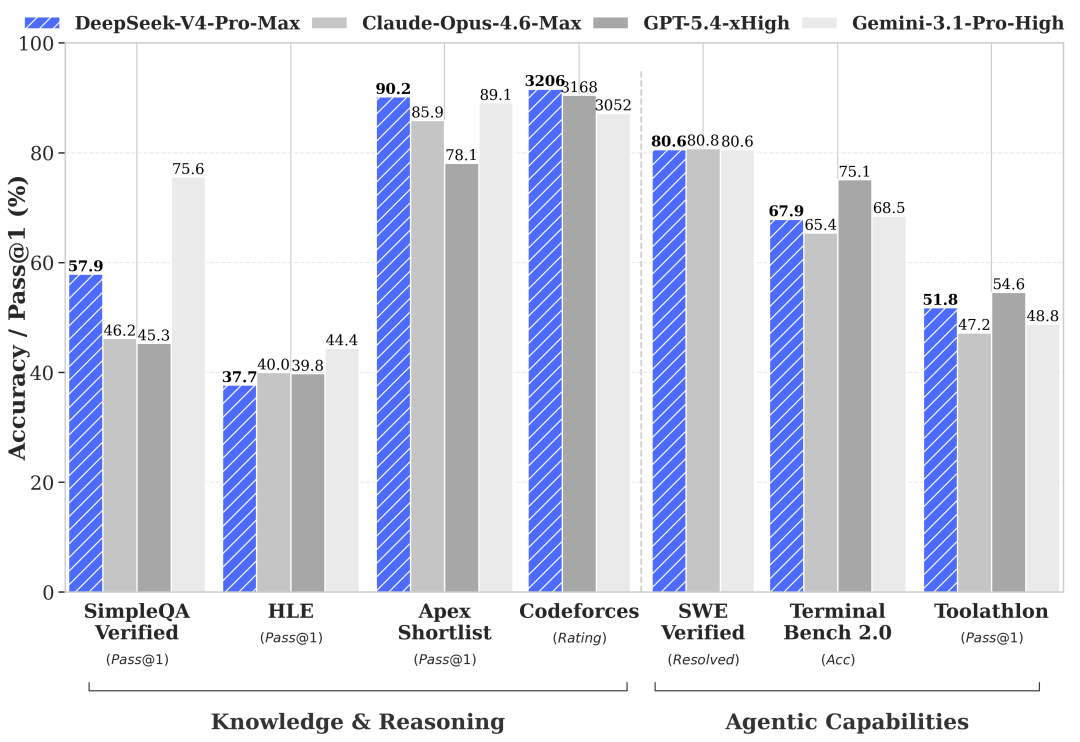

Official image

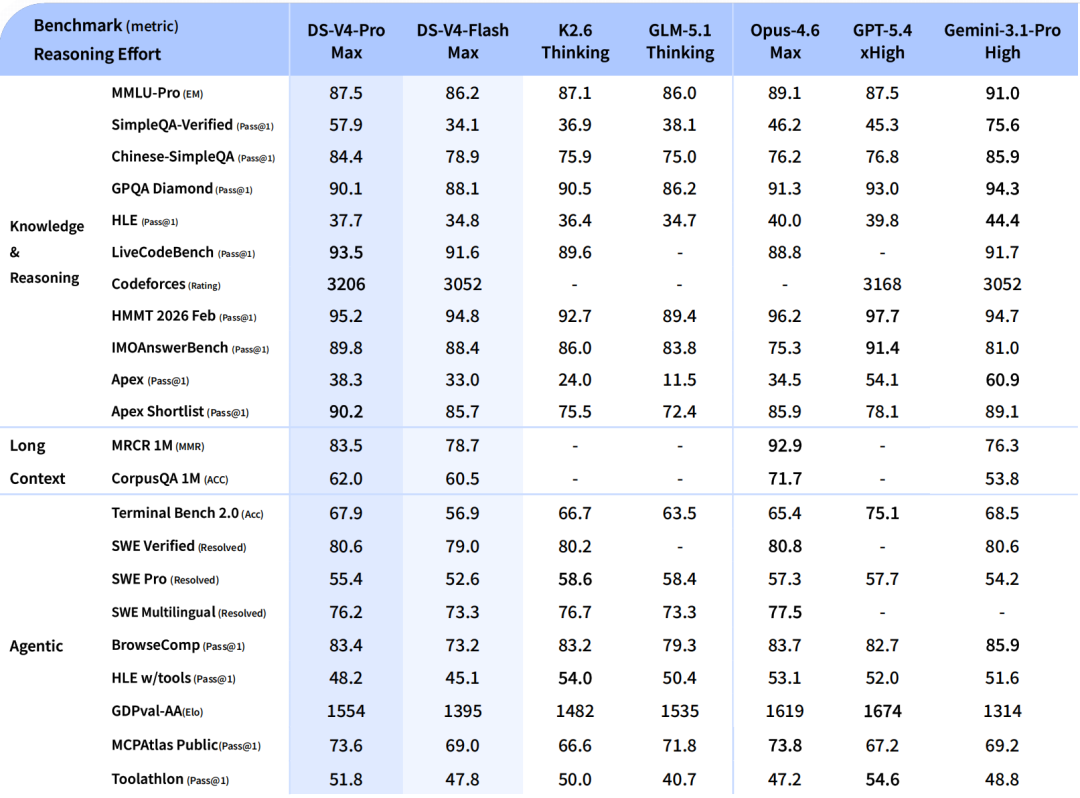

DeepSeek publishes a full benchmark poster comparing V4-Pro to top-tier closed-source models

The launch article embeds a detailed benchmark chart that makes V4-Pro's competitive positioning clear: top open-source model in agent coding, second overall in world knowledge, top-tier in reasoning.

- Agent coding: best among open-source models, competitive with Claude Opus 4.6 non-thinking.

- World knowledge: only behind Gemini-Pro-3.1; ahead of GPT-5.4 and Claude Opus 4.6.

Source: DeepSeek WeChat announcement.

DeepSeek-V4-Flash: fast and economical

V4-Flash is the lighter tier. It is designed for high-throughput workloads where latency matters more than peak reasoning depth. On simple reasoning tasks, V4-Flash matches V4-Pro; on harder benchmarks, the gap widens as expected for a model that trades some world-knowledge capacity for speed.

For most API users who previously used deepseek-chat or deepseek-reasoner, V4-Flash is the natural upgrade path. It replaces both legacy model names under a single endpoint with thinking and non-thinking modes.

- Matches V4-Pro on simple reasoning tasks with lower latency.

- Less world-knowledge capacity than V4-Pro, but still strong for general-purpose use.

- Replaces deepseek-chat (non-thinking) and deepseek-reasoner (thinking) in the API.

- Legacy model names redirect to V4-Flash and will be deprecated by July 24, 2026.

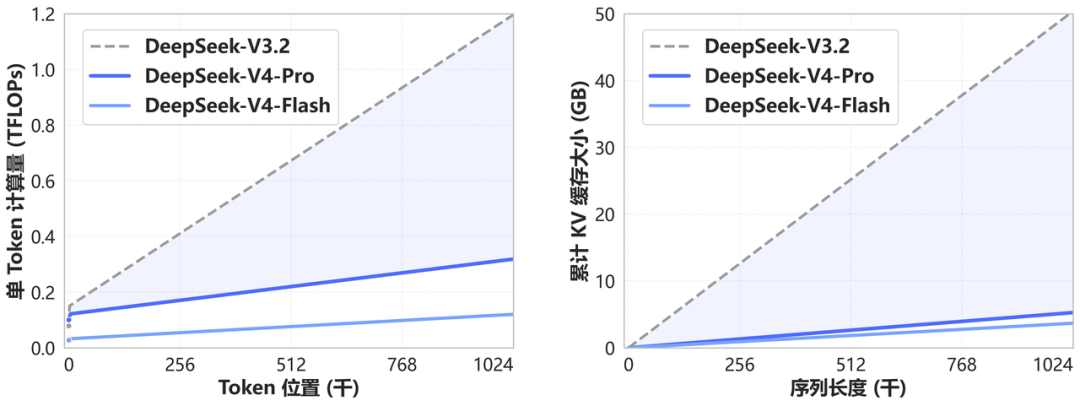

Architecture: token-level compression and DSA

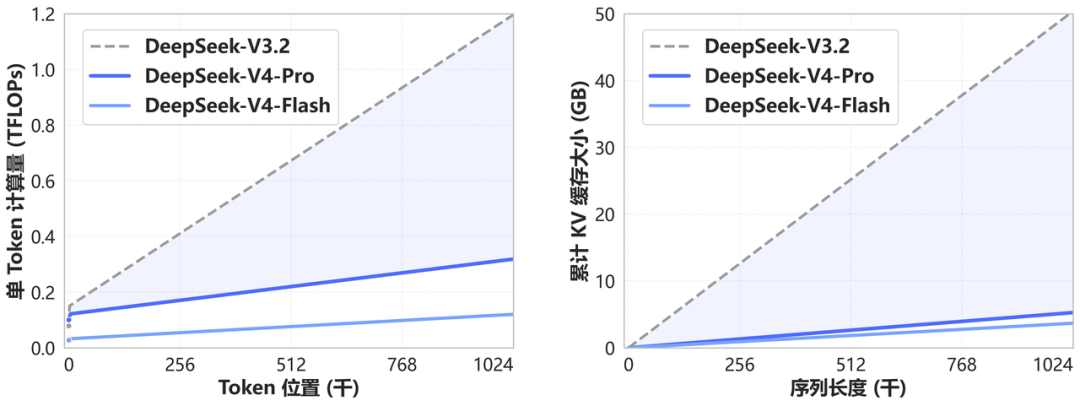

V4 introduces a new attention mechanism built on two components: token-level compression and DeepSeek Sparse Attention (DSA). The official chart shows that at 1M tokens, V4 uses roughly 6× less computation and memory than V3.2 would require for the same workload.

This efficiency gain is what makes the "1M context for every tier" positioning practical. Without the architecture change, serving 1M context at V4-Flash pricing would not be economically viable.
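To make the sparse-attention idea concrete, here is a minimal sketch of generic top-k sparse attention for a single query in plain Python. It is not DeepSeek's DSA implementation: the announcement does not describe how DSA selects tokens or how token-level compression works, so the selection rule (keep the `top_k` highest-scoring keys) and the function name `sparse_attention` are illustrative assumptions only.

```python
import math


def sparse_attention(query, keys, values, top_k):
    """Attend over only the top_k highest-scoring keys for one query.

    Generic illustration of sparse attention; NOT the actual DSA algorithm.
    """
    scale = 1.0 / math.sqrt(len(query))
    # Scaled dot-product score between the query and every key.
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]

    # Keep only the indices of the top_k largest scores (assumed selection rule).
    keep = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:top_k]

    # Softmax restricted to the kept positions (shifted by the max for stability).
    max_score = max(scores[i] for i in keep)
    weights = {i: math.exp(scores[i] - max_score) for i in keep}
    total = sum(weights.values())

    # Weighted sum of the selected value vectors.
    dim = len(values[0])
    output = [0.0] * dim
    for i, w in weights.items():
        for d in range(dim):
            output[d] += (w / total) * values[i][d]
    return output, sorted(keep)


if __name__ == "__main__":
    query = [1.0, 0.0]
    keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [2.0, 0.0]]
    values = [[1.0, 0.0], [0.0, 1.0], [5.0, 5.0], [0.0, 2.0]]
    out, kept = sparse_attention(query, keys, values, top_k=2)
    print("kept positions:", kept)
    print("output:", out)
```

In this toy version the softmax and weighted sum touch only `top_k` positions instead of every key, which is the general reason sparse attention lowers cost at long context. Scoring every key first is still linear in sequence length here; a production design would need a cheaper selection step.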

V4 uses token-level compression and DSA to cut long-context compute by up to 6×

The official efficiency chart compares V4 against V3.2 across multiple context lengths, with the gap reaching roughly 6× in computation and memory at 1M tokens.

- New attention mechanism: token-level compression + DeepSeek Sparse Attention (DSA).

- 1M context is now standard across all DeepSeek official services — no special tier or extra cost.

Source: DeepSeek WeChat announcement.

Agent framework optimization

V4-Pro is specifically optimized for agent workflows — long-horizon tasks where the model plans, uses tools, and iterates over many steps. DeepSeek lists explicit support for four agent frameworks: Claude Code, OpenClaw, OpenCode, and CodeBuddy.

The launch article shows a concrete example: V4-Pro generating a complete PPT file inside an agent framework. This combines planning (deciding slide structure), content generation (writing text), visual layout (arranging elements), and file assembly (producing the output) in one chain — a task that goes well beyond code generation.

V4-Pro can generate complete PPT files inside an agent framework

The launch article shows a concrete agent workflow where V4-Pro generates a full presentation file — a task that combines planning, visual layout, content writing, and file assembly in one chain.

- Agent frameworks supported: Claude Code, OpenClaw, OpenCode, CodeBuddy.

- Demonstrates end-to-end creative output, not just code generation.

Source: DeepSeek WeChat announcement.

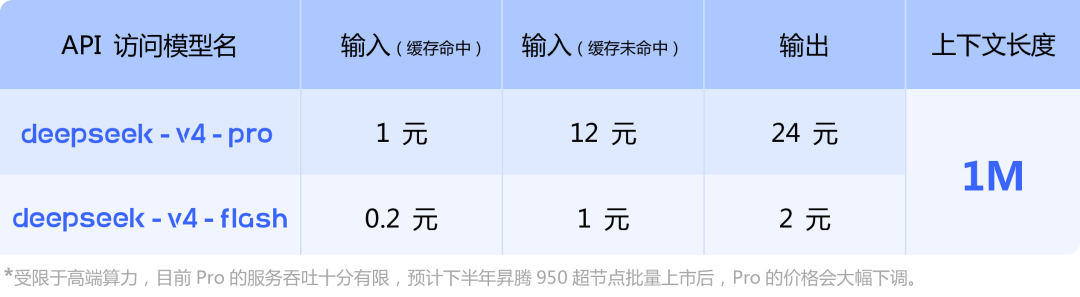

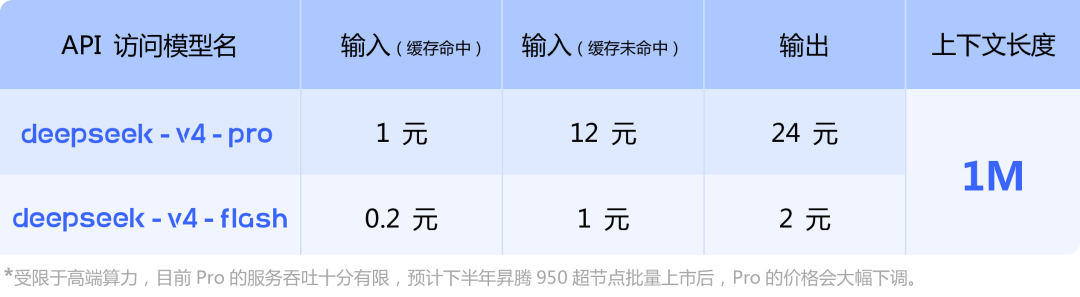

API access and model names

The API uses two simple model names. Both support thinking and non-thinking modes, controlled by the reasoning_effort parameter (values: high or max). The context window is 1M tokens for both tiers.

API access uses simple model names with thinking and non-thinking mode support

The official API docs show two model names, deepseek-v4-pro and deepseek-v4-flash, both supporting reasoning_effort (high/max). Legacy names (deepseek-chat, deepseek-reasoner) redirect to V4-Flash and will be deprecated on July 24, 2026.

- deepseek-v4-pro: highest quality, supports thinking mode.

- deepseek-v4-flash: fast and economical, replaces deepseek-chat and deepseek-reasoner.

Source: DeepSeek WeChat announcement.

| Parameter | V4-Pro | V4-Flash |
|---|---|---|
| Model name | deepseek-v4-pro | deepseek-v4-flash |
| Context window | 1M tokens | 1M tokens |
| Thinking mode | Yes (reasoning_effort) | Yes (reasoning_effort) |
| Non-thinking mode | Yes | Yes |
| Best for | Hard reasoning, agent coding | Fast inference, general use |
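As a rough illustration of how the model names and reasoning_effort fit together, the sketch below builds an OpenAI-style chat request body. The model names and the high/max values come from the article; the helper name `build_chat_request`, the message layout, and the choice to omit reasoning_effort for non-thinking mode are assumptions, since the article does not say how non-thinking mode is selected.

```python
import json

# Values listed in the article for the reasoning_effort parameter.
REASONING_EFFORTS = {"high", "max"}


def build_chat_request(model, prompt, reasoning_effort=None):
    """Build a chat request body as a plain dict (hypothetical helper).

    Assumption: leaving out reasoning_effort requests non-thinking mode.
    The article does not confirm this; check the official API docs.
    """
    if reasoning_effort is not None and reasoning_effort not in REASONING_EFFORTS:
        raise ValueError(f"unsupported reasoning_effort: {reasoning_effort!r}")

    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if reasoning_effort is not None:
        payload["reasoning_effort"] = reasoning_effort
    return payload


if __name__ == "__main__":
    # Thinking-mode request against the Pro tier.
    pro = build_chat_request(
        "deepseek-v4-pro", "Refactor this module.", reasoning_effort="max"
    )
    # Request without reasoning_effort against the Flash tier.
    flash = build_chat_request("deepseek-v4-flash", "Summarize this file.")
    print(json.dumps(pro, indent=2))
    print(json.dumps(flash, indent=2))
```

The resulting dict can be serialized and sent with any HTTP client or OpenAI-compatible SDK; authentication and the endpoint URL are left out because the article does not specify them.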

Legacy model name migration

DeepSeek is simplifying its API surface. The old model names deepseek-chat and deepseek-reasoner now redirect to deepseek-v4-flash in non-thinking and thinking modes respectively. These legacy names will be fully deprecated on July 24, 2026 — three months after launch.

If you have existing integrations using deepseek-chat or deepseek-reasoner, they will continue to work during the transition period but should be updated to the new names before the deadline.
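The mapping described above is small enough to capture in a lookup table. The following sketch shows one way to rewrite legacy names in client code before the deadline; the helper names `migrate_model_name` and `days_until_deprecation` are hypothetical, while the name mapping and the date come from the article.

```python
from datetime import date

# Legacy name -> (new model name, thinking mode enabled), per the article.
LEGACY_MODELS = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}

# Deprecation date stated in the article.
DEPRECATION_DATE = date(2026, 7, 24)


def migrate_model_name(name):
    """Return (model_name, thinking) for a configured model name.

    Legacy names map to deepseek-v4-flash with the matching mode.
    Any other name is returned unchanged with thinking=None, meaning
    the caller's existing mode setting should be left alone.
    """
    if name in LEGACY_MODELS:
        return LEGACY_MODELS[name]
    return name, None


def days_until_deprecation(today):
    """Days remaining before the legacy names are deprecated."""
    return (DEPRECATION_DATE - today).days


if __name__ == "__main__":
    for configured in ("deepseek-chat", "deepseek-reasoner", "deepseek-v4-pro"):
        print(configured, "->", migrate_model_name(configured))
    print("days left from launch:", days_until_deprecation(date(2026, 4, 24)))
```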

Open source and local deployment

V4 weights are published on Hugging Face and ModelScope. A technical report PDF is also available for researchers who want to understand the architecture details beyond what the launch article covers.

Self-hosting follows the same pattern as previous DeepSeek releases: download the weights, set up a compatible inference server (vLLM, SGLang, or similar), and point your agent framework at the local endpoint. The 1M context window means you need enough GPU memory to hold the KV cache for long sequences. Token-level compression and DSA reduce that footprint, but the hardware requirement is still substantial.
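To get a feel for why KV cache memory dominates at long context, the sketch below applies the standard sizing formula for an uncompressed KV cache in a conventional transformer. The layer count, KV head count, and head dimension are made-up placeholder values, not V4's real configuration, and the formula ignores V4's compression, so treat the result as an upper-bound style illustration only.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Size of an uncompressed KV cache for one sequence.

    The leading 2 accounts for storing both a key and a value tensor per layer.
    bytes_per_value=2 corresponds to 16-bit storage (fp16 or bf16).
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


if __name__ == "__main__":
    # Hypothetical model shape, chosen only for illustration (NOT V4's config).
    layers, kv_heads, head_dim = 60, 8, 128

    for tokens in (128_000, 1_000_000):
        size = kv_cache_bytes(layers, kv_heads, head_dim, tokens)
        print(f"{tokens:>9} tokens: {size / 2**30:.1f} GiB per sequence")
```

Because the cache grows linearly with sequence length, moving from 128K to 1M tokens multiplies this figure by roughly eight for the same model shape, which is why any reduction from the attention design matters for self-hosting.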

DeepSeek-V4 makes 1M context the default, not the premium

The V4 generation removes the biggest practical barrier to long-context inference — cost and compute — by redesigning the attention layer itself. V4-Pro competes with top-tier closed-source models on agent coding, and V4-Flash delivers the same context window at a fraction of the price. For anyone evaluating Chinese AI models for production coding workflows, V4 is now the baseline to compare against.

Frequently asked questions

What is the difference between DeepSeek-V4-Pro and V4-Flash?

V4-Pro is the higher-quality tier optimized for hard reasoning, agent coding, and knowledge-heavy tasks. V4-Flash is faster and more economical, matching V4-Pro on simple tasks but falling behind on complex reasoning and world knowledge. Both share the same 1M context window and new attention architecture.

Does DeepSeek-V4 support 1M context for all users?

Yes. The official announcement states that 1M context is now standard for all DeepSeek official services. There is no separate pricing tier or special access required.

What happens to the old deepseek-chat and deepseek-reasoner model names?

They redirect to deepseek-v4-flash. deepseek-chat maps to V4-Flash in non-thinking mode; deepseek-reasoner maps to V4-Flash in thinking mode. The legacy names will be deprecated on July 24, 2026.

Is DeepSeek-V4 open source?

Yes. V4 weights are available on Hugging Face and ModelScope. A technical report PDF is also published for architecture details.

Which agent frameworks support DeepSeek-V4?

The launch article lists four frameworks with explicit optimization: Claude Code, OpenClaw, OpenCode, and CodeBuddy. General API compatibility means other frameworks can also connect using the standard OpenAI-compatible endpoint.

What is DSA in DeepSeek-V4?

DSA stands for DeepSeek Sparse Attention. Combined with token-level compression, it reduces computation and memory usage at long context lengths by up to 6× compared to V3.2. This is what makes 1M context practical at V4-Flash pricing.