MiMo-V2.5 & V2.5-Pro Model Guide: Native Multimodal Agent, 42% Token Savings, Token Plan Overhaul

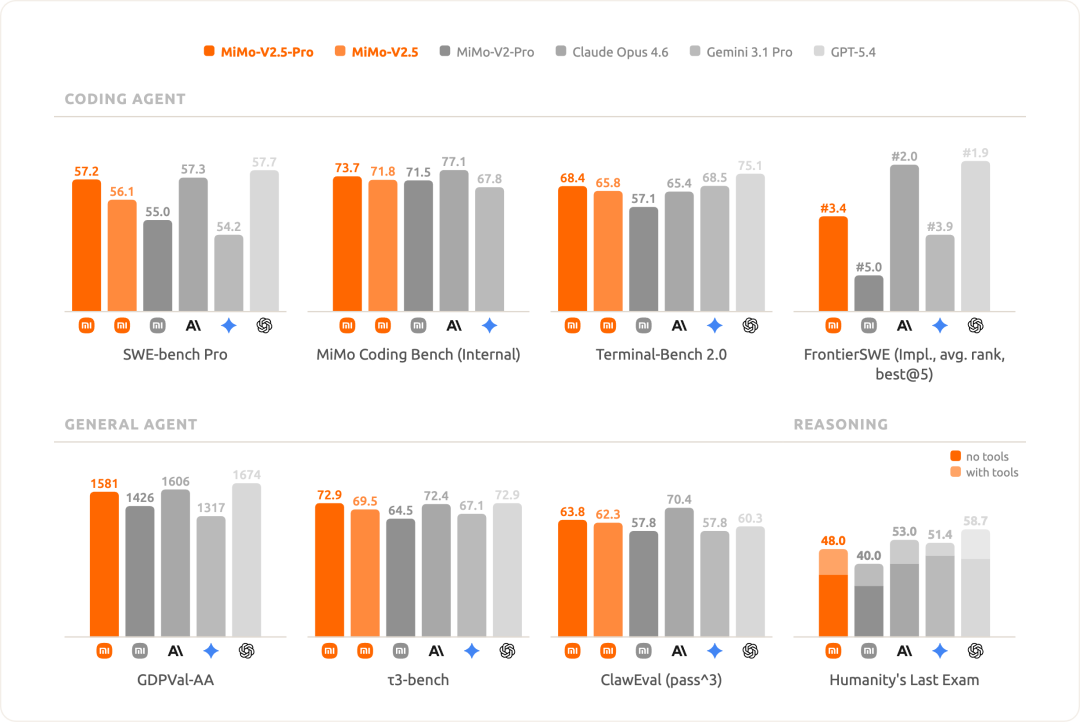

Xiaomi launched the MiMo-V2.5 series on April 22, 2026 with two headline models. MiMo-V2.5-Pro is the strongest model Xiaomi has shipped, and Xiaomi positions it against Claude Opus 4.6 and GPT-5.4 on complex agent tasks. MiMo-V2.5 is a native multimodal agent that sees, hears, reads, and acts, at roughly 50% lower API cost than the previous-generation V2-Pro. The Token Plan also received a major overhaul: no more 256K/1M credit differentiation, a night rate at 80% of the standard rate, auto-renewal discounts, and an annual plan at 88% off. Both models are slated for open-source release.

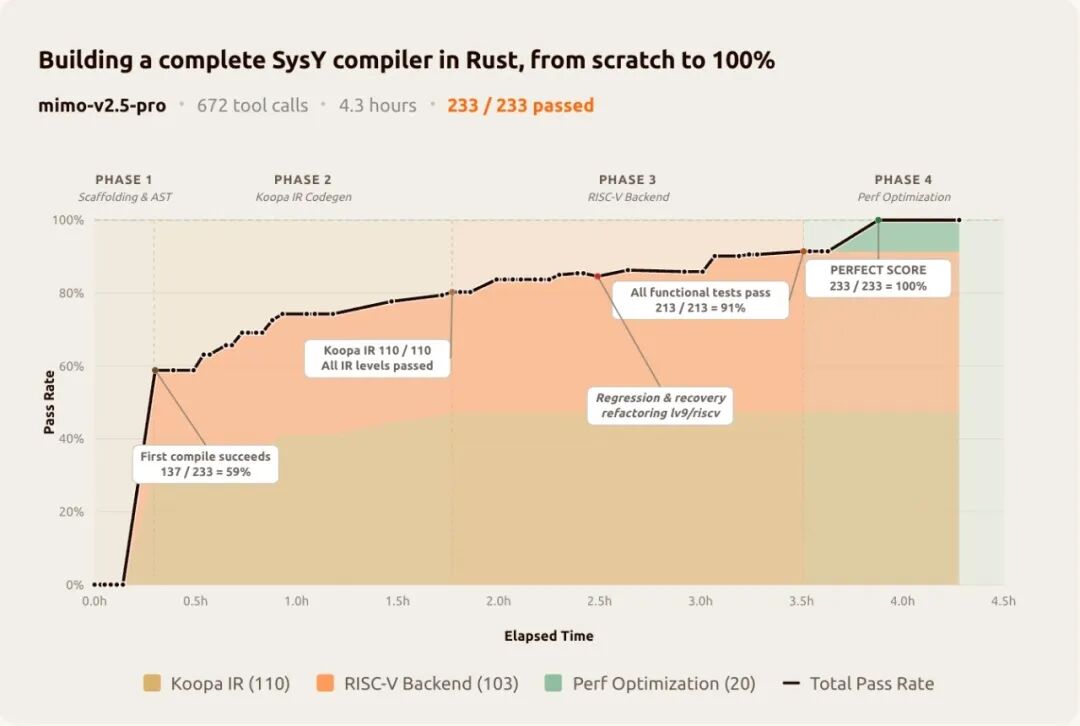

- V2.5-Pro completed a full Rust SysY compiler (PKU course project) in 4.3 hours, 672 tool calls, scoring 233/233 — a task that normally takes undergraduate students weeks.

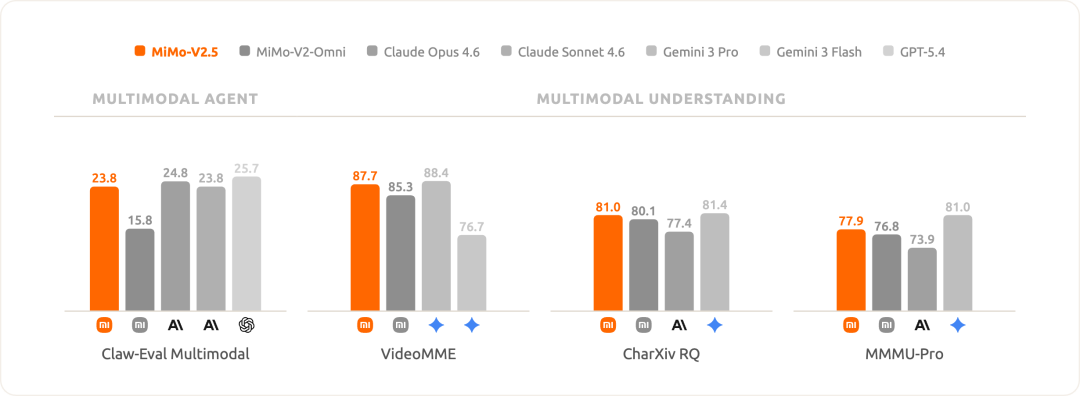

- V2.5 is a native multimodal agent (image, audio, video) with 1M context, surpassing V2-Omni in video understanding and chart analysis benchmarks.

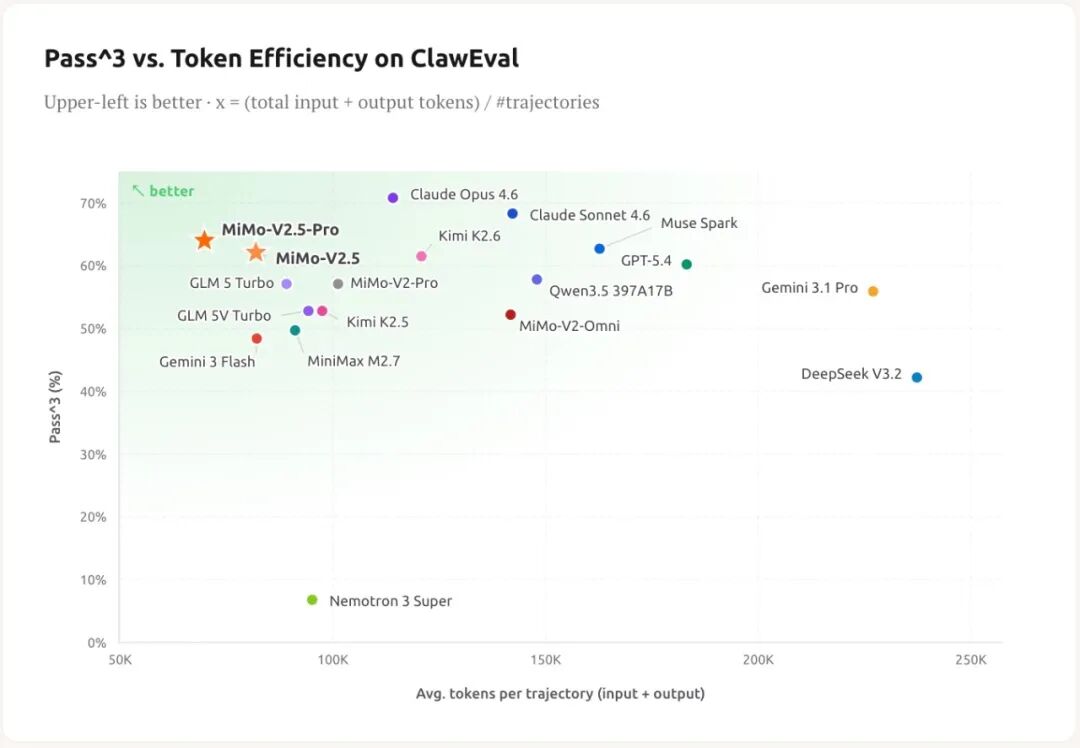

- V2.5-Pro uses 42% fewer tokens than Kimi K2.6, and V2.5 uses 50% fewer than Muse Spark, at the same ClawEval scores.

- Token Plan overhaul: night discount (80% of the standard rate, 00:00–08:00 Beijing time), auto-renewal discounts (70%/77% off), annual plan (88% off), and a credits reset for all existing users.

MiMo-V2.5 series: what launched

The MiMo-V2.5 family includes four models: MiMo-V2.5, MiMo-V2.5-Pro, MiMo-V2.5-TTS Series, and MiMo-V2.5-ASR. The two headline models — V2.5 and V2.5-Pro — represent a full-stack upgrade from the previous generation: stronger reasoning, more stable agent execution, longer sustained attention, better instruction following (including fuzzy instructions), and improved multimodal perception.

Xiaomi describes this as a jump from "usable" to "genuinely good" — the models can now handle serious professional work with higher confidence. Both V2.5-Pro and V2.5 are scheduled for global open-source release.

Official announcement

MiMo-V2.5 series enters public beta with V2.5, V2.5-Pro, V2.5-TTS, and V2.5-ASR

Xiaomi announced the full MiMo-V2.5 family with stronger reasoning, more stable agent execution, longer context, better instruction following, and full multimodal perception.

- V2.5-Pro targets long-horizon, high-difficulty agent tasks — the strongest model Xiaomi has shipped.

- V2.5 is a native multimodal agent model that covers most general agent scenarios at ~50% lower cost than V2-Pro.

Source: Xiaomi MiMo WeChat announcement.

| Model | Role | Key upgrade |
|---|---|---|
| MiMo-V2.5-Pro | Flagship agent model | Long-horizon agent tasks, competes with Claude Opus 4.6 / GPT-5.4 |
| MiMo-V2.5 | General multimodal agent | Native image/audio/video, 1M context, ~50% lower API cost than V2-Pro |
| MiMo-V2.5-TTS Series | Text-to-speech | Voice synthesis for agent voice output |
| MiMo-V2.5-ASR | Automatic speech recognition | Audio input understanding for agent workflows |

MiMo-V2.5-Pro: the strongest model Xiaomi has shipped

MiMo-V2.5-Pro is designed for the hardest agent tasks: those requiring sustained reasoning over hundreds or thousands of tool calls. In internal tests, it completed single sessions involving nearly a thousand tool calls while maintaining logical consistency and following implicit instructions embedded deep in the context.

Xiaomi positions V2.5-Pro as directly competitive with Claude Opus 4.6 and GPT-5.4 in general agent capability, complex software engineering, and long-horizon task execution. According to Xiaomi, the model can now be trusted with serious professional work.

Benchmark result

MiMo-V2.5-Pro scores 233/233 on a PKU compiler course project in 4.3 hours

The Rust SysY compiler task (lexer, parser, AST, Koopa IR, RISC-V backend, optimizations) normally takes Peking University undergrads weeks. V2.5-Pro finished in 4.3 hours with 672 tool calls.

- First compilation pass rate: 137/233 (59%) — architecture was correct before any test ran.

- Koopa IR: 110/110, RISC-V backend: 103/103, optimizations: 20/20 — each subsystem scored perfectly.

Source: Xiaomi MiMo WeChat announcement.

| Task | Duration | Tool calls | Result |
|---|---|---|---|
| Rust SysY compiler (PKU course) | 4.3 hours | 672 | 233/233 full score |
| Video editor web app | 11.5 hours | 1,868 | 8,192 lines of working code |

Rust SysY compiler: a real-world benchmark

The SysY compiler task comes from Peking University's Compilers course. It requires implementing a complete compiler in Rust: lexical analyzer, parser, AST, Koopa IR code generation, RISC-V assembly backend, and performance optimizations. PKU undergraduates typically spend weeks on this project.

MiMo-V2.5-Pro completed it in 4.3 hours with 672 tool calls, scoring 233/233 on hidden tests. The model built the entire pipeline skeleton first, then tackled each layer systematically: Koopa IR (110/110), RISC-V backend (103/103), optimizations (20/20). The first compilation pass scored 137/233 (59%) — meaning the architecture was fundamentally correct before any testing began.

When a refactoring at round 512 caused a regression in two test points, the model diagnosed the issue, rolled back, and continued pushing forward. This structured self-correction discipline is exactly what long-horizon agent tasks reward.

- First pass: 137/233 (59% cold-start pass rate) — correct architecture from the start.

- Koopa IR: 110/110, RISC-V backend: 103/103, optimizations: 20/20.

- Self-corrected a regression at round 512 without human intervention.

- Reference baseline: PKU undergrads typically need weeks for the same project.
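The reported figures can be cross-checked with a few lines of arithmetic. The sketch below only uses the numbers quoted above; the variable and function names are illustrative, not part of any Xiaomi tooling.

```python
# Per-subsystem test counts as reported in the announcement.
SUBSYSTEM_PASSED = {"koopa_ir": 110, "riscv_backend": 103, "optimizations": 20}
TOTAL_TESTS = 233
FIRST_PASS = 137  # tests passing on the first compilation pass


def pass_rate(passed: int, total: int) -> float:
    """Return the pass rate as a percentage."""
    return 100.0 * passed / total


# The three subsystem suites should add up to the full test count.
subsystem_total = sum(SUBSYSTEM_PASSED.values())
first_pass_pct = pass_rate(FIRST_PASS, TOTAL_TESTS)

print(subsystem_total)           # 233
print(round(first_pass_pct, 1))  # 58.8
```

The three subsystem suites account for all 233 tests, and 137/233 is 58.8%, which the article rounds to 59%.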

MiMo-V2.5: native multimodal agent with 1M context

MiMo-V2.5 is built for the agent era: it can simultaneously see (images and video), hear (audio), and read (text), then translate that understanding into actions. Its agent capabilities on ClawEval exceed those of MiMo-V2-Pro, while API costs are roughly 50% lower.

Multimodal perception also surpasses the previous V2-Omni generation. On VideoMME, CharXiv, and MMMU-Pro, V2.5 approaches or matches top-tier closed-source models in cross-modal reasoning, video understanding, and chart analysis.

| Capability | MiMo-V2.5 | MiMo-V2-Omni |
|---|---|---|
| Agent capability (ClawEval) | Exceeds V2-Pro level | V2-Pro era baseline |
| API cost vs V2-Pro | ~50% lower | Same generation |
| Video understanding | Approaches top closed-source models | Previous generation |
| Chart analysis | Improved cross-modal reasoning | Previous generation |
| Context window | 1M tokens | 1M tokens |
| Native modalities | Image, audio, video | Text, image, audio, video |

Token efficiency: doing more with less

Both V2.5 models are optimized for token efficiency — achieving the same benchmark scores while consuming significantly fewer tokens. This directly impacts cost-per-task for agent workflows.

| Model | Compared to | Token savings |
|---|---|---|
| MiMo-V2.5-Pro | Kimi K2.6 | 42% fewer tokens |
| MiMo-V2.5 | Muse Spark | 50% fewer tokens |
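To see what these percentages mean for a single task, here is a minimal sketch. The one-million-token baseline is a hypothetical figure chosen for illustration, and each saving applies only against that model's own comparison model (Kimi K2.6 for V2.5-Pro, Muse Spark for V2.5), so the two results are not directly comparable with each other.

```python
# Token savings reported by Xiaomi at equal ClawEval scores.
REPORTED_SAVINGS = {
    "MiMo-V2.5-Pro": 0.42,  # vs Kimi K2.6
    "MiMo-V2.5": 0.50,      # vs Muse Spark
}


def tokens_after_savings(baseline_tokens: int, savings_fraction: float) -> int:
    """Tokens consumed when using `savings_fraction` fewer tokens than the baseline."""
    if not 0.0 <= savings_fraction < 1.0:
        raise ValueError("savings_fraction must be in [0, 1)")
    return round(baseline_tokens * (1.0 - savings_fraction))


# Hypothetical task on which the comparison model consumes 1,000,000 tokens.
baseline = 1_000_000
pro_tokens = tokens_after_savings(baseline, REPORTED_SAVINGS["MiMo-V2.5-Pro"])
v25_tokens = tokens_after_savings(baseline, REPORTED_SAVINGS["MiMo-V2.5"])

print(pro_tokens)  # 580000
print(v25_tokens)  # 500000
```

Whether fewer tokens also means lower spend depends on the per-token price of each model, which this sketch does not cover.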

V2.5-Pro vs V2.5: which should you use?

Xiaomi's guidance is straightforward: use V2.5-Pro for long, hard agent tasks (complex multi-step software engineering, extended workflows) and V2.5 for most general agent scenarios. V2.5 also accepts native multimodal input (image, audio, video) and has a faster average inference speed, which suits latency-sensitive tasks.

| Dimension | V2.5-Pro | V2.5 |
|---|---|---|
| Target | Long, hard agent tasks | General agent scenarios |
| Agent strength | Strongest Xiaomi model | Exceeds V2-Pro |
| Multimodal | Text only | Native image, audio, video |
| Inference speed | Standard | Faster average speed |
| Token Plan credits | 2x | 1x |
| Best for | Compiler-scale projects, multi-hour runs | Daily coding, multimodal tasks, cost-sensitive use |
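The guidance in the table can be turned into a small routing helper. This is a sketch under stated assumptions: the `Task` fields and the priority order of the checks are illustrative choices, and the returned strings are the model display names from this article, not confirmed API identifiers.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical description of a job to route; not an official schema."""
    needs_multimodal: bool = False   # image, audio, or video input
    long_horizon: bool = False       # multi-hour run, hundreds of tool calls
    latency_sensitive: bool = False  # interactive use where speed matters


def choose_model(task: Task) -> str:
    """Pick a model following the comparison table above."""
    # The table lists V2.5-Pro as text only, so multimodal input goes to V2.5.
    if task.needs_multimodal:
        return "MiMo-V2.5"
    # Long, hard agent tasks are the stated target for V2.5-Pro.
    if task.long_horizon and not task.latency_sensitive:
        return "MiMo-V2.5-Pro"
    # Everything else: the cheaper (1x credits), faster general model.
    return "MiMo-V2.5"


print(choose_model(Task(long_horizon=True)))                         # MiMo-V2.5-Pro
print(choose_model(Task(needs_multimodal=True, long_horizon=True)))  # MiMo-V2.5
print(choose_model(Task()))                                          # MiMo-V2.5
```

Checking multimodality first is a deliberate choice: a long task that needs image or video input cannot run on a text-only model, whatever its difficulty.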

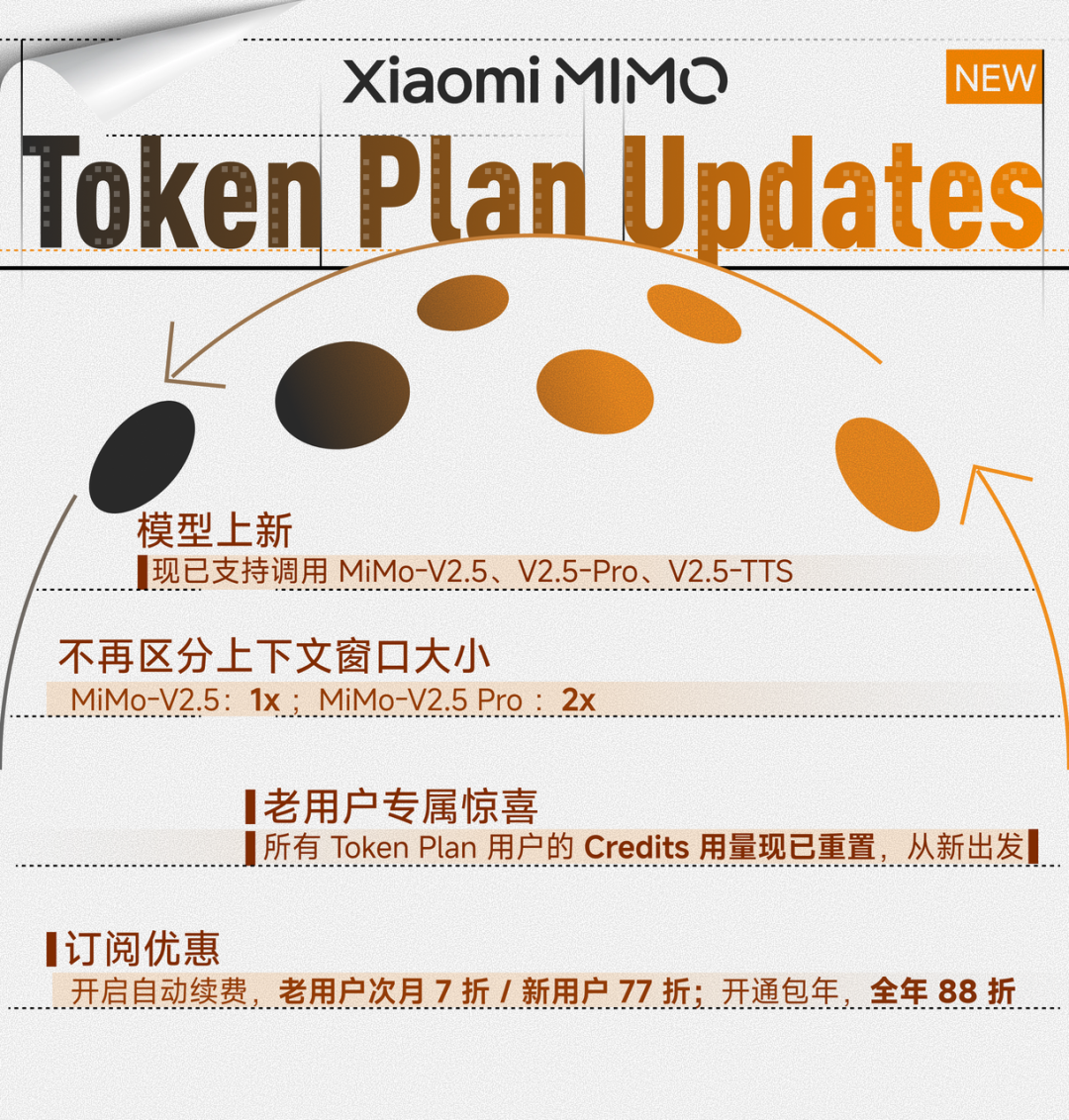

Token Plan overhaul: simpler, cheaper, more flexible

Xiaomi redesigned the Token Plan pricing alongside the V2.5 launch. The most significant change: Token Plan no longer differentiates between 256K and 1M context windows for credit consumption. One credit rate per model, regardless of context length.

New features include a night discount (80% of standard rates from 00:00 to 08:00 Beijing time), auto-renewal discounts (70% off for existing users and 77% off for new users, first renewal only), and an annual plan at 88% off list price.

- All existing Token Plan users received a full credits reset as a launch benefit.

- Credits reset does not affect subscription timing — existing plan durations remain unchanged.

- Token Plan subscription page: https://platform.xiaomimimo.com/#/token-plan

| Feature | Details |
|---|---|
| V2.5 credit rate | 1x (1 token = 1 credit) |
| V2.5-Pro credit rate | 2x (1 token = 2 credits) |
| Context differentiation | Removed; no more 256K vs 1M multiplier |
| Night discount | 80% of standard rate, 00:00–08:00 Beijing time |
| Auto-renewal (existing) | 70% off first renewal month |
| Auto-renewal (new) | 77% off first renewal month |
| Annual plan | 88% off, no stacking with other discounts |
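A rough estimate of credit consumption follows from the table. The sketch below assumes that the night rate multiplies credit consumption by 0.8, that it combines with the per-model multiplier, and that the window is 00:00 inclusive to 08:00 exclusive; none of these billing details are confirmed by the announcement, and the function names are illustrative.

```python
from datetime import datetime, timedelta, timezone

# Beijing time is UTC+8 year-round (no daylight saving), so a fixed offset works.
BEIJING = timezone(timedelta(hours=8))

# Credit multipliers from the Token Plan table.
CREDIT_MULTIPLIER = {"MiMo-V2.5": 1, "MiMo-V2.5-Pro": 2}
NIGHT_RATE = 0.8  # assumed to scale credit consumption during the night window


def is_night(ts: datetime) -> bool:
    """True if a timezone-aware timestamp falls in 00:00-08:00 Beijing time."""
    if ts.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    return 0 <= ts.astimezone(BEIJING).hour < 8


def credits_used(model: str, tokens: int, ts: datetime) -> float:
    """Estimate credits consumed by `tokens` tokens on `model` at time `ts`."""
    credits = float(tokens * CREDIT_MULTIPLIER[model])
    if is_night(ts):
        credits *= NIGHT_RATE
    return credits


day = datetime(2026, 4, 23, 14, 0, tzinfo=BEIJING)
night_utc = datetime(2026, 4, 22, 18, 0, tzinfo=timezone.utc)  # 02:00 in Beijing

print(credits_used("MiMo-V2.5-Pro", 1000, day))        # 2000.0
print(credits_used("MiMo-V2.5-Pro", 1000, night_utc))  # 1600.0
print(credits_used("MiMo-V2.5", 1000, night_utc))      # 800.0
```

Converting to Beijing time before checking the hour matters for callers in other time zones: 18:00 UTC already falls inside the discount window.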

API access and open-source roadmap

MiMo-V2.5 series models are available now through the Xiaomi MiMo platform at https://platform.xiaomimimo.com. The Token Plan subscription is at https://platform.xiaomimimo.com/#/token-plan. For interactive testing, Xiaomi MiMo Studio is available at https://aistudio.xiaomimimo.com/#/c.

Both MiMo-V2.5-Pro and MiMo-V2.5 are scheduled for global open-source release. Xiaomi has not yet announced a specific date.

- API: https://platform.xiaomimimo.com

- Token Plan: https://platform.xiaomimimo.com/#/token-plan

- MiMo Studio (interactive): https://aistudio.xiaomimimo.com/#/c

- V2.5-Pro and V2.5 open-source: coming soon (no date announced).

Try MiMo-V2.5 on the official platform

Sign up at the Xiaomi MiMo platform, choose Pay-As-You-Go or Token Plan, and integrate with your preferred coding tool. The Token Plan night discount and annual plan make V2.5 one of the most cost-effective agent models available.

Frequently asked questions

What is the difference between MiMo-V2.5 and MiMo-V2.5-Pro?

V2.5-Pro is Xiaomi's strongest model, designed for long-horizon, high-difficulty agent tasks (e.g., building a full compiler in one session). V2.5 is a native multimodal agent that handles image, audio, and video input, covers most general agent scenarios, runs faster, and costs roughly half as much on the Token Plan (1x vs 2x credits).

How does MiMo-V2.5-Pro compare to Claude Opus 4.6 and GPT-5.4?

Xiaomi positions V2.5-Pro as competitive with Claude Opus 4.6 and GPT-5.4 on general agent capability and complex software engineering. The Rust SysY compiler benchmark (233/233 in 4.3 hours) is a concrete data point supporting this claim. Independent third-party benchmarks are still emerging.

What changed in the MiMo Token Plan?

The biggest change is that credit consumption no longer depends on the 256K/1M context tier: V2.5 costs 1x credits and V2.5-Pro costs 2x credits. New features include a night discount (80% of the standard rate from 00:00 to 08:00 Beijing time), auto-renewal discounts (70%/77% off), and an annual plan at 88% off. All existing users also received a full credits reset.

Is MiMo-V2.5 open source?

Both MiMo-V2.5-Pro and MiMo-V2.5 are scheduled for global open-source release. Xiaomi has announced this plan but has not yet published a specific date.

How much token savings does MiMo-V2.5 offer?

V2.5-Pro uses 42% fewer tokens than Kimi K2.6, and V2.5 uses 50% fewer than Muse Spark, when achieving the same ClawEval agent benchmark scores. Fewer tokens per task means lower cost per task.