GLM-5 and GLM-5.1: Zhipu's Open-Source Flagship Models for Agentic Coding

GLM-5 launched on February 11, 2026 as Zhipu AI's open-source MoE flagship: 744B total parameters with 40B active, 28.5T training tokens, a 128K context window, and an MIT license. Less than two months later GLM-5.1 pushed further, reaching ~754B total / ~42B active parameters and demonstrating the ability to work autonomously for up to 8 hours on complex coding tasks. Together they form the current backbone of Zhipu's coding-agent ecosystem.

- GLM-5 is a 744B / 40B active MoE model trained on 28.5T tokens under the MIT license.

- GLM-5.1 can work autonomously for up to 8 hours and scores 58.4 on SWE-bench Pro, ahead of GPT-5.4 at 57.7.

- GLM-5V-Turbo adds multimodal vision+coding with a 200K context window and 128K max output.

Architecture and release timeline

GLM-5 was released on February 11, 2026 as Zhipu's largest open-source model to date. It uses a Mixture-of-Experts architecture with 744B total parameters and 40B active parameters per token, trained on 28.5 trillion tokens with a 128K context window. The model is released under the permissive MIT license, making it one of the most accessible frontier-scale open-source models available.

GLM-5.1 followed on April 8, 2026, scaling to approximately 754B total parameters and ~42B active parameters. The headline capability is sustained autonomous work: GLM-5.1 can operate independently on complex coding tasks for up to 8 hours. On SWE-bench Pro it scores 58.4, surpassing GPT-5.4 at 57.7.

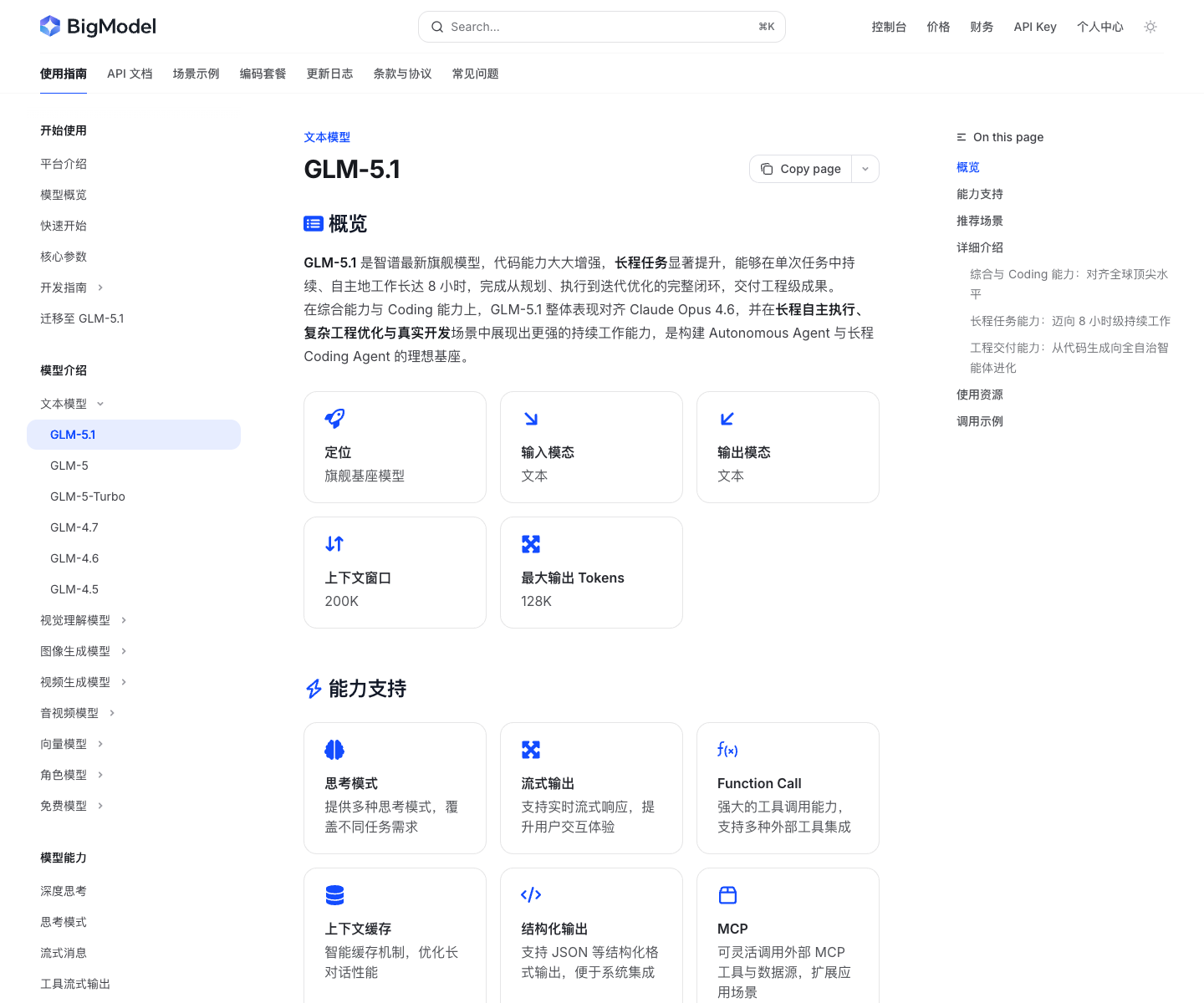

Official screenshot

The GLM-5.1 docs are the cleanest public page for current flagship specs and pricing

The current GLM docs present model context, feature support, and live API pricing more clearly than most third-party summaries. They are the safest place to anchor GLM family claims before branching into DevPack.

- Useful for separating model docs from DevPack package messaging.

- Helps keep GLM-5.1 and the wider GLM family aligned with official wording.

Source: Official GLM-5.1 docs.

| Model | Total Params | Active Params | Context | Release Date |
|---|---|---|---|---|
| GLM-5 | 744B | 40B | 128K | Feb 11, 2026 |
| GLM-5.1 | ~754B | ~42B | 128K | Apr 8, 2026 |
| GLM-5V-Turbo | Multimodal | — | 200K | Apr 2, 2026 |
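To make the total-versus-active distinction concrete, the short sketch below computes what share of each model's parameters is active per token, using only the counts in the table above. The GLM-5.1 figures are approximate in the source, so the result is a rough ratio rather than an official specification. The function and dictionary names are illustrative.

```python
# Parameter counts in billions, copied from the table above.
# The GLM-5.1 values are approximate in the source ("~754B", "~42B").
MODELS = {
    "GLM-5": {"total_b": 744, "active_b": 40},
    "GLM-5.1": {"total_b": 754, "active_b": 42},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Return the share of parameters that are active for each token."""
    return active_b / total_b


for name, params in MODELS.items():
    share = active_fraction(params["total_b"], params["active_b"])
    print(f"{name}: {share:.1%} of parameters active per token")
```

Both models activate only around one-twentieth of their weights per token, which is why the active count is the more useful figure when reasoning about per-token compute.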

Benchmark performance

GLM-5 posts strong numbers across the main coding-agent benchmarks. On SWE-bench Verified it reaches 77.8, roughly two to three points behind Claude Opus 4.5 (80.9), GPT-5.2 (80.0), and MiniMax M2.5 (80.2). It does not lead every chart, but it consistently ranks in the top tier.

On agent-heavy benchmarks the picture is similarly competitive. Terminal-Bench 2.0 gives GLM-5 a score of 56.2 (60.7 on the extended evaluation), and BrowseComp with context comes in at 75.9, which is notably higher than Claude Opus 4.5 (67.8) and GPT-5.2 (65.8).
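The gaps quoted above are easier to compare side by side. The sketch below computes GLM-5's point difference against each competitor, using only the scores stated in the two preceding paragraphs. The helper name `gaps` is illustrative, and a positive value means GLM-5 is ahead.

```python
# Scores as quoted in the text above; no other figures are assumed.
SCORES = {
    "SWE-bench Verified": {
        "GLM-5": 77.8,
        "Claude Opus 4.5": 80.9,
        "GPT-5.2": 80.0,
        "MiniMax M2.5": 80.2,
    },
    "BrowseComp (with context)": {
        "GLM-5": 75.9,
        "Claude Opus 4.5": 67.8,
        "GPT-5.2": 65.8,
    },
}


def gaps(scores: dict[str, float], baseline: str = "GLM-5") -> dict[str, float]:
    """Return baseline-minus-competitor point gaps, rounded to one decimal."""
    base = scores[baseline]
    return {
        model: round(base - score, 1)
        for model, score in scores.items()
        if model != baseline
    }


for benchmark, scores in SCORES.items():
    print(benchmark, gaps(scores))
```

GLM-5 trails by 2.2 to 3.1 points on SWE-bench Verified and leads by 8.1 to 10.1 points on BrowseComp with context.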

Chart: Public SWE-bench Verified scores for the current generation of coding-agent models. Per-model figures come from the official Anthropic, MiniMax (M2.5 release), OpenAI, and Z.AI evaluations and the official Kimi K2.5 technical blog. Source: Official Z.AI GLM-5 blog post.

Chart: Multi-step terminal and agent workflow benchmark comparing leading coding models. Per-model figures come from the official Anthropic, MiniMax (M2.7 release), and Z.AI (standard / extended) evaluations and the official Kimi K2.5 technical blog. Source: Official Z.AI GLM-5 blog post.

Chart: Web browsing and information retrieval benchmark with context-augmented evaluation. Per-model figures come from the official Z.AI, Anthropic, and OpenAI evaluations and the official Kimi K2.5 technical blog. Source: Official Z.AI GLM-5 blog post.

Supported tools and integrations

GLM-5 and GLM-5.1 are designed to slot into existing coding-agent toolchains. Zhipu has documented support for a range of popular tools, and the DevPack subscription route provides a single entry point for accessing GLM models across all of them.

| Tool | Compatibility | Notes |
|---|---|---|
| Claude Code | Anthropic-compatible | Use the Anthropic route with model override |
| OpenCode | OpenAI-compatible | Provider picker or manual config |
| Cline | OpenAI-compatible | Standard OpenAI base URL override |
| Droid | OpenAI-compatible | Supported through coding endpoint |
| OpenClaw | DevPack route | Full agent workflow support |
| Kilo Code | OpenAI-compatible | Supported through coding endpoint |
| Roo Code | OpenAI-compatible | Standard OpenAI base URL override |
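For the OpenAI-compatible rows, a "base URL override" generally means sending a standard Chat Completions request to a different host. The sketch below builds such a request with Python's standard library and does not send it. The base URL, model identifier, and API key are hypothetical placeholders rather than values from Zhipu's documentation, and the `/chat/completions` path is assumed to follow the OpenAI convention. Take the real values from the official docs.

```python
import json
import urllib.request

# Hypothetical placeholders: none of these come from the official docs.
BASE_URL = "https://api.example.invalid/v1"  # NOT a real Z.AI endpoint
MODEL_ID = "glm-5.1"                         # assumed model identifier
API_KEY = "YOUR_API_KEY"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Explain this stack trace.")
print(request.get_method(), request.full_url)
```

Tools such as Cline or Roo Code expose the same three settings (base URL, API key, model name) in their provider configuration, so the request shape stays the same and only the values change.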

API pricing

The public Z.AI pricing page already lists USD token prices for the current GLM family, which makes it a cleaner buyer reference than older reposted CNY tables. The page also distinguishes direct API pricing from the separate DevPack package route.

- DevPack subscriptions are still the package route for tool-first buying, while the pricing page above is the direct API route.

- The official pricing page also keeps GLM-5.1, GLM-5, GLM-5-Turbo, and GLM-5V-Turbo on one family table.

- For buyer-facing writing, explain the route first: DevPack for package access, pricing page for PAYG API billing.

| Model | Input | Cached input | Cache write | Output |
|---|---|---|---|---|
| GLM-5.1 | $1.4 | $0.26 | Limited-time free | $4.4 |
| GLM-5 | $1.0 | $0.20 | Limited-time free | $3.2 |
| GLM-5V-Turbo | $1.2 | $0.24 | Limited-time free | $4.0 |
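A rough pay-as-you-go estimate can be sketched from the table above. The table does not state its unit, so the code assumes the prices are USD per 1M tokens. That is an assumption to check against the official pricing page. Cache writes are treated as free, matching the "Limited-time free" column, and the function name and token counts are illustrative.

```python
# USD prices copied from the table above.
# ASSUMPTION: prices are per 1M tokens; the table does not state the unit.
TOKENS_PER_PRICE_UNIT = 1_000_000

PRICES = {
    "GLM-5.1": {"input": 1.4, "cached_input": 0.26, "output": 4.4},
    "GLM-5": {"input": 1.0, "cached_input": 0.20, "output": 3.2},
    "GLM-5V-Turbo": {"input": 1.2, "cached_input": 0.24, "output": 4.0},
}


def estimate_cost(
    model: str,
    input_tokens: int,
    output_tokens: int,
    cached_input_tokens: int = 0,
) -> float:
    """Estimate USD cost; cached tokens are a subset of input_tokens."""
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    price = PRICES[model]
    fresh_tokens = input_tokens - cached_input_tokens
    total = (
        fresh_tokens * price["input"]
        + cached_input_tokens * price["cached_input"]
        + output_tokens * price["output"]
    )
    return total / TOKENS_PER_PRICE_UNIT


# Illustrative workload: 2M input tokens (500K served from cache), 300K output.
cost = estimate_cost("GLM-5.1", 2_000_000, 300_000, cached_input_tokens=500_000)
print(f"Estimated GLM-5.1 cost: ${cost:.2f}")
```

Under the stated assumption this workload comes to about $3.55 on GLM-5.1. Output tokens account for more than a third of that total, even though they make up a small share of the traffic.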

Start with DevPack to evaluate GLM-5.1 in your own workflow

The fastest way to test GLM-5.1 is through the DevPack subscription, which bundles API access with tool integration and avoids the need to configure endpoints manually.


Frequently asked questions

What is the difference between GLM-5 and GLM-5.1?

GLM-5 is the base open-source model released Feb 11, 2026. GLM-5.1 is the improved version released Apr 8, 2026 with slightly more parameters (~754B total / ~42B active) and the ability to work autonomously for up to 8 hours. GLM-5.1 also scores higher on SWE-bench Pro at 58.4.

Is GLM-5 open source?

Yes. GLM-5 is released under the MIT license, making it one of the most permissively licensed frontier-scale models available. The weights are available on Hugging Face.

How does GLM-5 compare to Claude Opus 4.5 on SWE-bench?

GLM-5 scores 77.8 on SWE-bench Verified, while Claude Opus 4.5 scores 80.9. GLM-5 is competitive but does not lead on that specific benchmark. However, on BrowseComp with context, GLM-5 scores 75.9 vs Claude Opus 4.5 at 67.8, where GLM-5 does lead.