Kimi K2 and K2.5: Moonshot AI's Open-Source MoE Models (1T Parameters, 32B Active)

Kimi K2, released in July 2025, is Moonshot AI's open-source Mixture-of-Experts model with 1.04 trillion total parameters and 32 billion active per token. It uses 384 routed experts, MLA attention, and a 128K context window. Kimi K2.5, released in January 2026, extends the same backbone with multimodal capabilities via a MoonViT vision encoder, a 256K context window, and an Agent Swarm system for multi-agent coordination. Together they form one of the strongest open-source model families for coding, reasoning, and agentic workflows.

- K2 is a 1.04T/32B MoE model with 384 experts and MLA attention, released under the MIT license.

- K2.5 adds multimodal understanding, a 256K context window, and Agent Swarm multi-agent coordination.

- K2.5 reaches 76.8% on SWE-bench Verified and 85.0% on LiveCodeBench v6.

- Moonshot later extended the family with K2.6, which is now a separate open-source coding-and-agent release with its own guide.

Architecture: K2 and K2.5 share a MoE backbone

Kimi K2 is built on a 61-layer Mixture-of-Experts architecture with 384 routed experts and 1 shared expert. Each token activates 8 routed experts plus the shared expert, yielding 32 billion active parameters out of 1.04 trillion total. The model uses Multi-head Latent Attention (MLA) and a 128K-token context window, and it was trained on 15.5 trillion tokens with the MuonClip optimizer.
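To make the "8 of 384 routed experts per token" idea concrete, here is a minimal sketch of top-k expert selection for a single token. It is an illustration only: the function name `route_token`, the random router logits, and the softmax normalization over the selected experts are assumptions, since the article does not describe how K2 scores or normalizes its gates.

```python
import math
import random

NUM_ROUTED_EXPERTS = 384  # routed experts, from the article
TOP_K = 8                 # routed experts activated per token, from the article


def route_token(router_logits, top_k=TOP_K):
    """Return (expert_index, gate_weight) pairs for the top_k routed experts.

    Assumption: gate weights are a softmax over the selected logits only.
    The shared expert is not routed; it would run for every token.
    """
    if len(router_logits) < top_k:
        raise ValueError("need at least top_k experts to choose from")
    # Indices of the top_k largest logits, highest first.
    chosen = sorted(
        range(len(router_logits)),
        key=lambda i: router_logits[i],
        reverse=True,
    )[:top_k]
    # Numerically stable softmax restricted to the chosen experts.
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


# Hypothetical router output for one token (random, seeded for repeatability).
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_ROUTED_EXPERTS)]
selected = route_token(logits)

for expert_id, weight in selected:
    print(f"expert {expert_id:3d}  gate weight {weight:.3f}")
```

In a real MoE layer the token's output would be the gate-weighted sum of the selected experts' outputs plus the shared expert's output; only the selection step is shown here.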

Kimi K2.5 retains the same backbone and extends it with multimodal capabilities. It integrates a MoonViT vision encoder with approximately 400 million parameters and undergoes continual pretraining on 15 trillion mixed visual-and-text tokens. The context window expands to 256K tokens, and the model introduces two inference modes: Thinking (extended reasoning) and Instant (fast response).

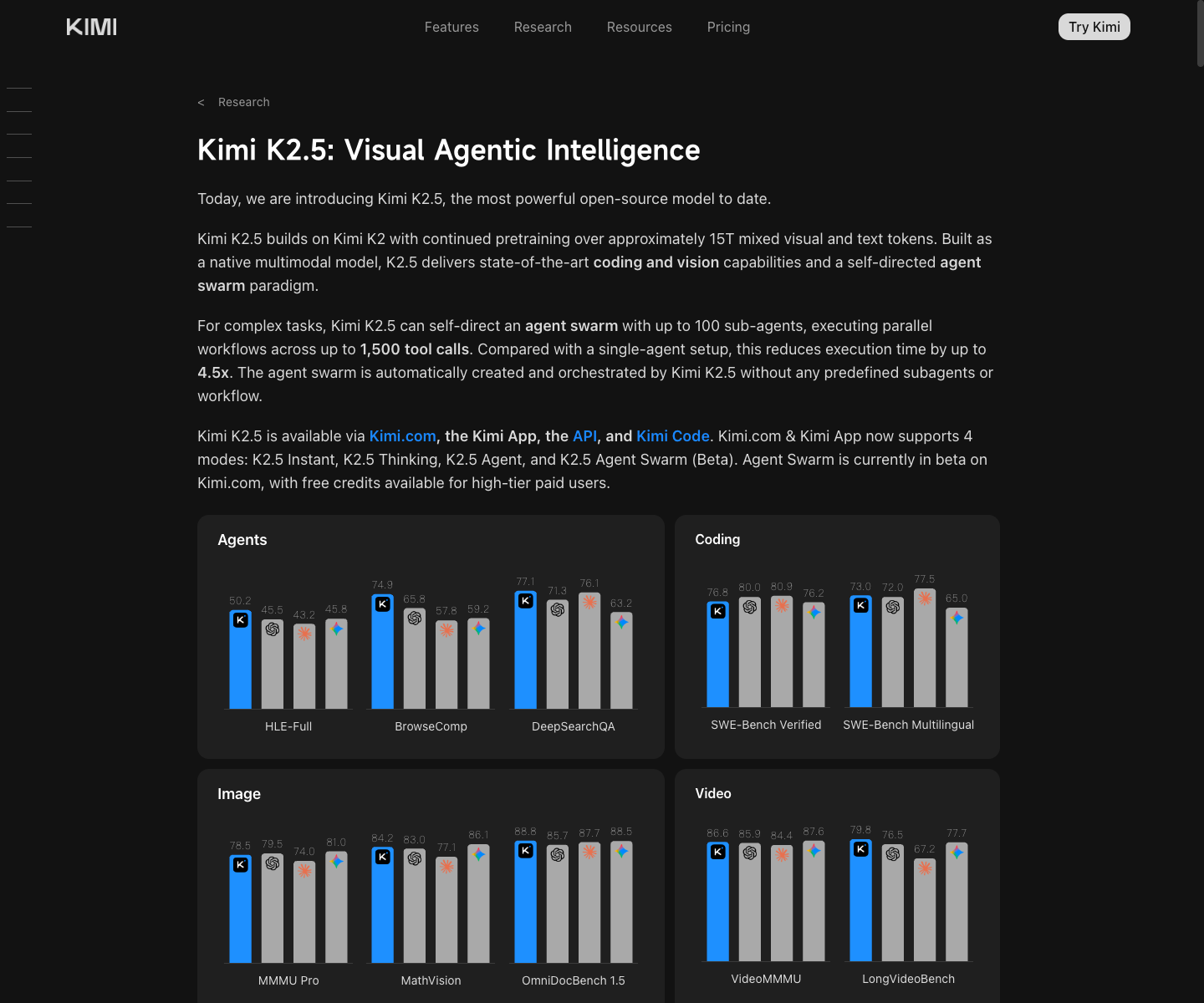

Official screenshot

Kimi K2.5 is best introduced from the technical blog, not from social summaries

The public K2.5 tech blog already combines the multimodal upgrade story, benchmark tables, and Agent Swarm explanation in one official page.

- Best official visual for the K2 to K2.5 family transition.

- Useful for readers who need proof that Agent Swarm and MoonViT are part of the public story.

Source: Official Kimi K2.5 tech blog.

Official screenshot

The K2.5 API price table lives on the Moonshot Open Platform side

This official pricing page is the cleanest source for cached input, input, and output pricing. The docs UI may default to Chinese depending on region, but the table is still the source-backed pricing reference.

- Best visual proof for readers asking about `kimi-k2.5` token cost.

- Pairs well with the Kimi Code page to show why membership pricing and API pricing should not be mixed.

Source: Official Kimi K2.5 pricing.

| Dimension | Kimi K2 | Kimi K2.5 |
|---|---|---|
| Release date | Jul 11, 2025 | Jan 27, 2026 |
| Total parameters | 1.04T | 1.04T (shared backbone) |
| Active parameters | 32B | 32B |
| Expert count | 384 routed + 1 shared | 384 routed + 1 shared |
| Experts per token | 8 routed + 1 shared | 8 routed + 1 shared |
| Layers | 61 | 61 |
| Attention type | MLA (Multi-head Latent Attention) | MLA |
| Context window | 128K tokens | 256K tokens |
| Multimodal | No | Yes (MoonViT ~400M vision encoder) |
| Training tokens | 15.5T text | 15T mixed visual + text (continual pretraining) |
| License | MIT | MIT |
| Optimizer | MuonClip | MuonClip |
| Key new features | — | Agent Swarm, Thinking + Instant modes |
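The sparsity implied by these figures can be checked with a few lines of arithmetic. The sketch below uses only numbers from the table; the variable names are illustrative.

```python
# Figures copied from the comparison table.
TOTAL_PARAMS = 1.04e12   # 1.04T total parameters
ACTIVE_PARAMS = 32e9     # 32B active parameters per token
ROUTED_EXPERTS = 384
ROUTED_PER_TOKEN = 8

# Share of all parameters that participate in one token's forward pass.
active_param_share = ACTIVE_PARAMS / TOTAL_PARAMS
# Share of routed experts that one token is sent to.
routed_expert_share = ROUTED_PER_TOKEN / ROUTED_EXPERTS

print(f"active parameter share: {active_param_share:.1%}")  # 3.1%
print(f"routed expert share:    {routed_expert_share:.1%}")  # 2.1%
```

The active-parameter share is somewhat higher than the routed-expert share, which is consistent with some components (such as attention and the shared expert) running for every token, although the article does not give a parameter breakdown.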

Benchmarks: K2 and K2.5 competitive positioning

Kimi K2 delivers strong results for an open-source model, with 65.8% on SWE-bench Verified (agentic single attempt) and 89.5% on MMLU. K2.5 pushes significantly further, reaching 76.8% on SWE-bench Verified and 85.0% on LiveCodeBench v6, placing it competitively against closed-source leaders.

The most notable K2.5 results come from agent workflows. Its Agent Swarm system scores 78.4% on BrowseComp by coordinating multiple specialized agents. On mathematical reasoning, K2.5 achieves 96.1% on AIME 2025 and 87.6% on GPQA-Diamond.

| Benchmark | Kimi K2 | Kimi K2.5 | Notable competitors |
|---|---|---|---|
| SWE-bench Verified | 65.8% (single) / 71.6% (multiple) | 76.8% | MiniMax M2.5 80.2%, GLM5 77.8% |
| SWE-bench Pro | — | 50.7% | — |
| Terminal-Bench 2.0 | — | 50.8% | Qwen 3.6-Plus 61.6% |
| LiveCodeBench v6 | 53.7% | 85.0% | Qwen 3.6-Plus 87.1% |
| AIME 2024 | 69.6% | — | — |
| AIME 2025 | — | 96.1% | Step 3.5 Flash 97.3% |
| MMLU | 89.5% | — | — |
| GPQA-Diamond | 75.1% | 87.6% | — |
| Aider-Polyglot | 60.0% | — | — |
| BrowseComp (Agent Swarm) | — | 78.4% | — |
| MMMU-Pro | — | 78.5% | — |
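Only three rows report a score for both models, so the generation-over-generation gain can be computed directly. This is a small sketch using the table values; the dictionary and function names are illustrative, and the K2 SWE-bench Verified entry uses the single-attempt figure.

```python
# (K2, K2.5) scores in percent, for benchmarks where the table lists both.
SHARED_BENCHMARKS = {
    "SWE-bench Verified": (65.8, 76.8),  # K2 single-attempt figure
    "LiveCodeBench v6": (53.7, 85.0),
    "GPQA-Diamond": (75.1, 87.6),
}


def gains_in_points(scores):
    """Return the K2 -> K2.5 gain per benchmark, in percentage points."""
    return {name: round(new - old, 1) for name, (old, new) in scores.items()}


for name, delta in gains_in_points(SHARED_BENCHMARKS).items():
    print(f"{name}: +{delta:.1f}pp")
```

On these figures the gains are 11.0, 31.3, and 12.5 percentage points respectively. The comparison is only as reliable as the underlying evaluation settings, which may differ between the two releases.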

K2.5 at 76.8% is competitive with other leading models on SWE-bench Verified, a widely cited coding benchmark. Sources: official MiniMax M2.5 release; official Qwen 3.6 release and its comparison table; official Kimi K2.5 technical blog; official Kimi K2 release.

K2.5 scores 85.0% on LiveCodeBench v6, placing it near the top of publicly reported results. Sources: official Qwen 3.6 release; official Kimi K2.5 technical blog; Anthropic public benchmark.

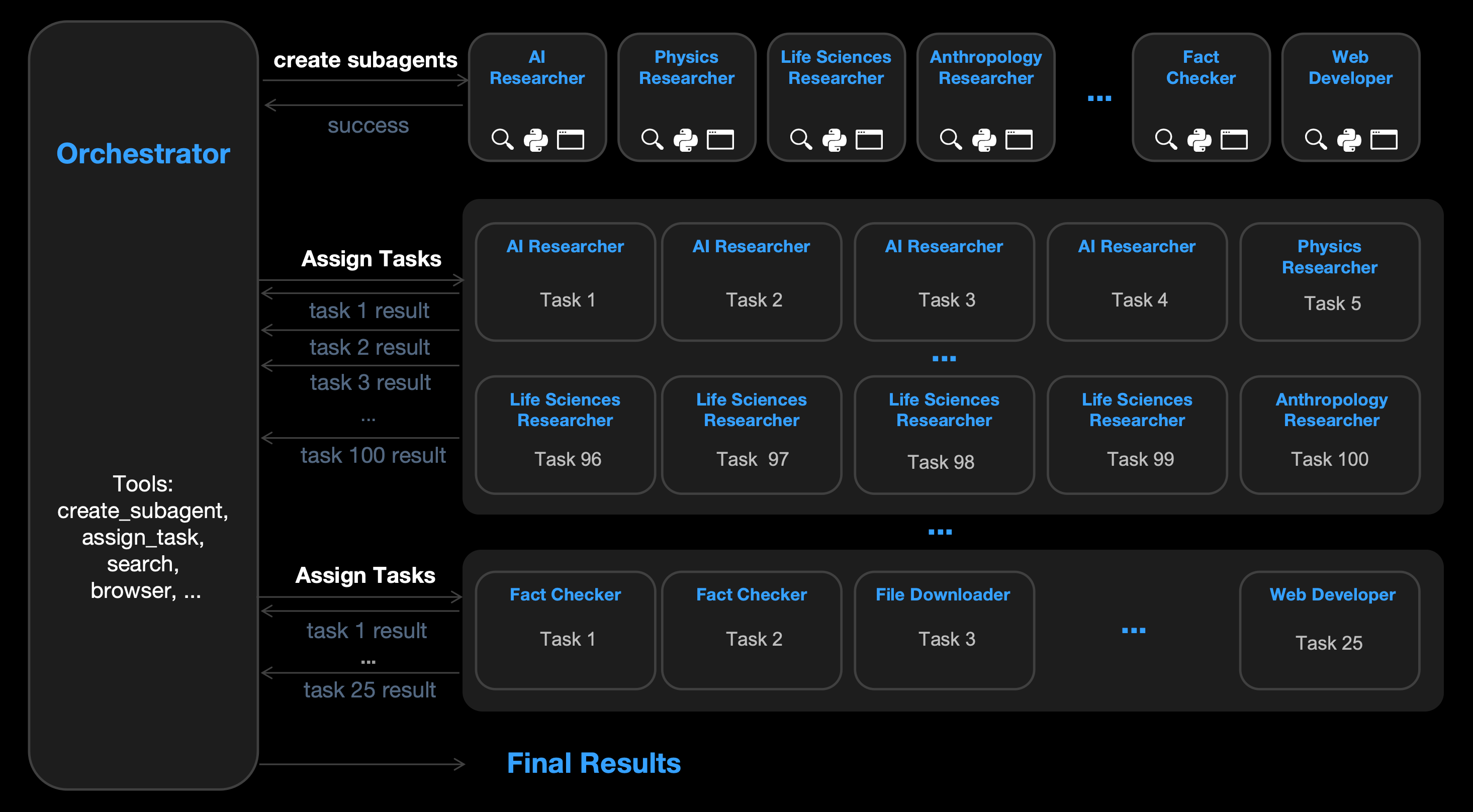

Agent Swarm: multi-agent coordination in K2.5

One of the most distinctive features of K2.5 is Agent Swarm, a multi-agent coordination system that dispatches specialized agents to handle different aspects of a complex task. On BrowseComp, the reported score rises from a single-agent baseline of 60.6% to 74.9% with two agents and 78.4% with the full swarm configuration.

This approach differs from single-agent tool-use by decomposing tasks across agents that can browse, reason, and verify independently before synthesizing a final answer. The result is a meaningful accuracy gain on information-retrieval benchmarks that require multi-step web navigation.
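The fan-out-then-synthesize pattern described above can be sketched with `asyncio`. This is a toy illustration, not Moonshot's implementation: `run_subagent`, `orchestrate`, `run_swarm`, and the role names are all hypothetical, and each sub-agent returns a placeholder string where a real one would call a model with tools.

```python
import asyncio


async def run_subagent(role: str, task: str) -> str:
    """Hypothetical sub-agent; a real one would call a model and its tools."""
    await asyncio.sleep(0)  # stand-in for model and tool latency
    return f"[{role}] findings for: {task}"


async def orchestrate(task: str, roles: list[str]) -> str:
    """Run one sub-agent per role concurrently, then merge their reports."""
    reports = await asyncio.gather(*(run_subagent(role, task) for role in roles))
    # Placeholder synthesis step: a real orchestrator would reconcile the
    # reports and resolve disagreements before answering.
    return "\n".join(reports)


def run_swarm(task: str, roles=("browse", "reason", "verify")) -> str:
    """Synchronous entry point for the sketch."""
    return asyncio.run(orchestrate(task, list(roles)))


if __name__ == "__main__":
    print(run_swarm("When was Kimi K2 released?"))
```

`asyncio.gather` returns results in the order the coroutines were passed, so the merged report lists the roles in a stable order even though they run concurrently.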

Official image

Moonshot visualizes K2.5 Agent Swarm as one orchestrator coordinating specialized sub-agents

This diagram comes directly from the official K2.5 technical blog and is one of the clearest public visuals for how Moonshot wants readers to understand the Agent Swarm architecture.

- Useful when explaining why K2.5 is positioned as a multi-agent workflow model rather than only a bigger checkpoint.

- Pairs naturally with the BrowseComp scaling table and the separate Open Platform pricing row.

Source: Official Kimi K2.5 tech blog.

| Configuration | BrowseComp score | Gain over baseline |
|---|---|---|
| Single agent | 60.6% | — |
| 2-agent swarm | 74.9% | +14.3pp |
| Full Agent Swarm | 78.4% | +17.8pp |

K2.6 and the later coding-agent roadmap

Moonshot AI followed K2.5 with Kimi K2.6 in April 2026, positioning it as a separate coding-and-agent release rather than just a silent checkpoint bump. The official K2.6 material focuses much more heavily on long-horizon coding, agent swarms, and tool workflows than the earlier K2 or K2.5 launch pages.

That makes K2.5 the architectural bridge between the original open-source K2 backbone and the later K2.6 workflow story. Readers who want the newest Kimi coding route, benchmarks, and API pricing should use the separate K2.6 guide instead of treating it as a footnote to K2.5.

- K2.6 is now publicly released, not just a beta teaser.

- The agentic coding roadmap follows the Agent Swarm infrastructure introduced in K2.5.

- Kimi Code (consumer coding product) and Moonshot Open Platform (API) remain separate routes.

Pricing, access, and integration routes

Kimi K2 weights are available on GitHub and Hugging Face under the MIT license, making them freely downloadable for local deployment or fine-tuning. K2.5 API access is available through the Moonshot Open Platform, with separate pricing from the consumer Kimi Code membership product.

The key distinction for buyers is that Kimi Code and Moonshot Open Platform are separate products with separate billing. Kimi Code is a membership-style coding product with its own client and subscription. The Open Platform provides API access for developers integrating K2.5 into custom workflows.

- K2 weights: open-source (MIT) on GitHub and Hugging Face.

- K2.5 API: available through the Moonshot Open Platform, priced per token as listed in the table below.

- Kimi Code: consumer coding membership with its own client and subscription.

- Do not mix Kimi Code membership pricing with Open Platform API pricing in comparisons.

| Model route | Cached input | Input | Output | Notes |
|---|---|---|---|---|
| kimi-k2.5 | ¥0.70 / 1M tokens | ¥4.00 / 1M tokens | ¥21.00 / 1M tokens | Public row lists a 262,144-token context window. |
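A per-request cost estimate follows directly from the three per-million-token prices in this row. The sketch below is a rough calculator, not an official billing formula: the function name is illustrative, and it assumes cached input tokens are billed at the cached rate instead of the regular input rate.

```python
from decimal import Decimal

# Prices in CNY per 1M tokens, copied from the kimi-k2.5 row above.
PRICES_CNY_PER_MILLION = {
    "cached_input": Decimal("0.70"),
    "input": Decimal("4.00"),
    "output": Decimal("21.00"),
}


def estimate_cost_cny(cached_input_tokens: int, input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the cost of one request in CNY.

    Assumption: cached input tokens are charged only at the cached rate,
    and the remaining input tokens at the regular input rate.
    """
    counts = {
        "cached_input": cached_input_tokens,
        "input": input_tokens,
        "output": output_tokens,
    }
    if any(n < 0 for n in counts.values()):
        raise ValueError("token counts must be non-negative")
    total = sum(PRICES_CNY_PER_MILLION[kind] * n for kind, n in counts.items())
    return total / Decimal(1_000_000)


# Example: 100K cached input, 50K fresh input, 10K output tokens.
cost = estimate_cost_cny(100_000, 50_000, 10_000)
print(f"estimated cost: ¥{cost}")
```

For the example request this comes to ¥0.07 + ¥0.20 + ¥0.21 = ¥0.48. `Decimal` is used so that currency amounts are not affected by binary floating-point rounding.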

Start with K2 weights for open-source use, or K2.5 API for production workflows

The MIT-licensed K2 weights are the fastest path for local experimentation. The K2.5 API on Moonshot Open Platform is the fastest path for production deployments that need multimodal and agent capabilities.

Frequently asked questions

What is the difference between Kimi K2 and K2.5?

K2 is a text-only 1.04T/32B MoE model with a 128K context window. K2.5 extends the same backbone with multimodal capabilities (MoonViT vision encoder), a 256K context window, Agent Swarm multi-agent coordination, and Thinking + Instant dual inference modes.

Is Kimi K2 open source?

Yes. Kimi K2 weights are released under the MIT license and are available on GitHub and Hugging Face for download, local deployment, and fine-tuning.

How does Agent Swarm improve K2.5 performance?

Agent Swarm dispatches multiple specialized agents to handle different aspects of a task. On BrowseComp, it improves accuracy from a single-agent baseline of 60.6% to 78.4% with the full swarm, a gain of 17.8 percentage points.