MiMo-V2-Pro: Xiaomi's Flagship Agent Model with 1M Context and Official Tool Routes

MiMo-V2-Pro, released on March 19, 2026, is Xiaomi's flagship agent-era model with over 1 trillion total parameters, 42 billion active parameters, a 7:1 Hybrid Attention design, and a 1-million-token context window. The clearest public picture comes from three official Xiaomi pages read together: the release note for positioning, the pricing page for China and overseas price bands, and the tools overview for OpenClaw, Claude Code, Codex, Cline, Roo Code, Zed, and related integrations.

- Over 1T total / 42B active parameters with Hybrid Attention (7:1 ratio) and a 1M token context window.

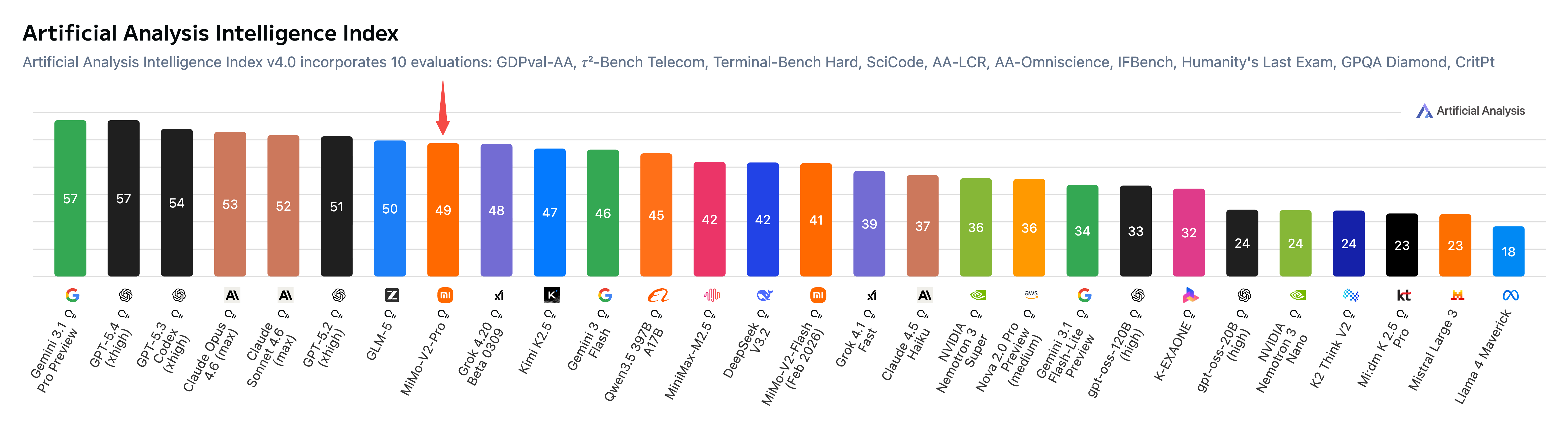

- Ranks 8th worldwide on the Artificial Analysis Intelligence Index, 2nd among Chinese LLMs.

- The official release note highlights OpenClaw and Claude Code as core real-world agent workflows.

- Integrates with OpenClaw, OpenCode, Claude Code, Kilo Code, Cline, Roo Code, Codex, and more.

Architecture: 1T parameters with 1M context

MiMo-V2-Pro is built on a large-scale Mixture-of-Experts architecture with over 1 trillion total parameters and 42 billion active per token. The model uses a Hybrid Attention mechanism with a 7:1 ratio (up from the previous generation's 5:1), which balances full attention for critical tokens with efficient sparse attention for long contexts.
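
The source gives the 7:1 ratio without saying exactly what it counts. Assuming it means seven efficient (local or sparse) attention layers for every full-attention layer, which is a common way to describe hybrid attention stacks but is not confirmed here, the layer pattern could be sketched as follows. The function name, the labels, and the layer counts used below are hypothetical.

```python
def layer_schedule(num_layers: int, efficient_per_full: int = 7) -> list[str]:
    """Label each layer in a hybrid attention stack.

    Assumption (not confirmed by the source): every group of
    `efficient_per_full` efficient-attention layers is followed by
    one full-attention layer.
    """
    if num_layers < 0 or efficient_per_full < 0:
        raise ValueError("layer counts must be non-negative")
    period = efficient_per_full + 1
    return [
        "full" if (index + 1) % period == 0 else "efficient"
        for index in range(num_layers)
    ]


# Hypothetical 16-layer stack: layers 8 and 16 use full attention.
schedule = layer_schedule(16)
full_layers = [i + 1 for i, kind in enumerate(schedule) if kind == "full"]
```

Under this reading, moving from 5:1 to 7:1 lowers the share of full-attention layers from one in six to one in eight, which is where the long-context efficiency would come from.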

The 1-million-token context window is one of the largest publicly available, enabling MiMo-V2-Pro to process extremely long documents, codebases, and multi-step agent traces in a single session. A lightweight Multi-Token Prediction (MTP) layer accelerates generation without sacrificing quality.

Official screenshot

MiMo-V2-Pro now has a strong official release page with buyer-relevant claims

The Xiaomi release note is the best page to anchor the 1T / 42B / 1M context story before you move into the pricing and integration docs.

- Useful for replacing older beta-era summaries with official product language.

- Pairs naturally with the pricing and tools overview pages for route clarity.

Source: Official MiMo-V2-Pro release note.

| Parameter | Value |
|---|---|
| Total parameters | >1T |
| Active parameters | 42B |
| Architecture type | Mixture-of-Experts (MoE) |
| Attention mechanism | Hybrid Attention (7:1 ratio) |
| Context window | 1M tokens |
| MTP layer | Lightweight, for fast generation |
| Release date | Mar 19, 2026 |
| Artificial Analysis rank | 8th worldwide, 2nd among Chinese LLMs |

Official performance and product signals

The public Xiaomi pages are strongest on product-facing signals rather than on one giant benchmark dump. The release note explicitly says MiMo-V2-Pro ranks 8th worldwide and 2nd among Chinese models on the Artificial Analysis board, and it frames the model as a strong real-world agent brain for OpenClaw and Claude Code workflows.

On the buyer-facing MiMo page, Xiaomi also calls out ClawEval performance and says the hands-on experience has surpassed Claude Sonnet 4.6 and is approaching Opus 4.6 while keeping API pricing much lower. That is a stronger source-backed way to position the model than recycling isolated third-party scorecards.

Official image

Xiaomi publishes the Artificial Analysis ranking image directly on the official MiMo-V2-Pro page

The buyer-facing MiMo page does not only describe the model in prose. It also exposes the ranking visual that Xiaomi uses to support the “8th worldwide, 2nd among Chinese LLMs” positioning.

- Useful when readers want a traceable official image instead of a copied leaderboard screenshot from social posts.

- Works well alongside the pricing page because it keeps performance proof and route proof on official Xiaomi surfaces.

Source: Official MiMo-V2-Pro page.

| Signal | What Xiaomi publishes | Why it matters |
|---|---|---|
| Artificial Analysis ranking | 8th worldwide, 2nd among Chinese LLMs | The clearest public leaderboard signal in Xiaomi's own release note. |
| ClawEval | 61.5, #3 globally | Useful because Xiaomi explicitly ties this to real OpenClaw workflows. |
| Real-world experience claim | Surpassed Claude Sonnet 4.6 and approaching Opus 4.6 | This is how Xiaomi positions MiMo-V2-Pro for buyer-facing productivity use. |
| Agent workflow focus | OpenClaw and Claude Code called out directly | Shows the official story is about deployed agents, not only chat or benchmark demos. |

API pricing and context tiers

MiMo-V2-Pro uses official route-specific pricing that now distinguishes China and overseas rows directly on Xiaomi's public pricing page. The same page also exposes cached-input prices and confirms that cache writing is temporarily free.

That makes MiMo one of the cleaner public examples of why region and route should be written explicitly instead of flattened into one converted sticker price.

- The same public page says cache writing is free for a limited time.

- The pricing page confirms 1M context and 128K max output on the current route.

| Route | Context band | Input | Cached input | Output |
|---|---|---|---|---|
| China | 0-256K | ¥7.00 / 1M tokens | ¥1.40 / 1M tokens | ¥21.00 / 1M tokens |
| China | 256K-1M | ¥14.00 / 1M tokens | ¥2.80 / 1M tokens | ¥42.00 / 1M tokens |
| Overseas | 0-256K | $1.00 / 1M tokens | $0.20 / 1M tokens | $3.00 / 1M tokens |
| Overseas | 256K-1M | $2.00 / 1M tokens | $0.40 / 1M tokens | $6.00 / 1M tokens |
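
As a rough illustration of how the route and context bands combine, the Python sketch below estimates the cost of a single request. The prices are copied from the table; everything else is an assumption made for illustration. The function and field names are hypothetical, the band is chosen from the request's total input tokens and applied to the whole request, and 256K is taken to mean 256,000 tokens. Check the official pricing page for the exact billing rules before relying on the numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BandPrice:
    """Prices per 1M tokens for one route and context band."""
    input: float
    cached_input: float
    output: float


# Copied from the pricing table: China rows are in CNY, overseas rows in USD.
PRICES = {
    ("china", "0-256K"): BandPrice(7.00, 1.40, 21.00),
    ("china", "256K-1M"): BandPrice(14.00, 2.80, 42.00),
    ("overseas", "0-256K"): BandPrice(1.00, 0.20, 3.00),
    ("overseas", "256K-1M"): BandPrice(2.00, 0.40, 6.00),
}

BAND_BOUNDARY = 256_000   # assumption: "256K" means 256,000 tokens
MAX_CONTEXT = 1_000_000   # 1M-token context window


def estimate_cost(route: str, input_tokens: int, output_tokens: int,
                  cached_tokens: int = 0) -> float:
    """Estimate one request's cost in the route's currency (CNY or USD).

    Assumption: the band is picked from total input tokens and applies
    to the whole request, including cached input and output.
    """
    if route not in ("china", "overseas"):
        raise ValueError(f"unknown route: {route!r}")
    if not 0 <= cached_tokens <= input_tokens <= MAX_CONTEXT:
        raise ValueError("token counts are out of range")
    if output_tokens < 0:
        raise ValueError("output_tokens must be non-negative")

    band = "0-256K" if input_tokens <= BAND_BOUNDARY else "256K-1M"
    price = PRICES[(route, band)]
    uncached_tokens = input_tokens - cached_tokens
    total = (uncached_tokens * price.input
             + cached_tokens * price.cached_input
             + output_tokens * price.output)
    return total / 1_000_000
```

Under these assumptions, an overseas request with 100,000 input tokens and 10,000 output tokens comes to about $0.13, while a China-route request with 500,000 input tokens (400,000 of them cached) and 20,000 output tokens comes to about ¥3.36.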

Integrations and tool ecosystem

MiMo-V2-Pro supports a broad range of coding tools and agent frameworks. Official integrations include OpenClaw, OpenCode, Claude Code, Kilo Code, Cline, Roo Code, Codex, Cherry Studio, Zed, and Qwen Code. This makes MiMo-V2-Pro one of the best-connected Chinese LLMs for third-party tool compatibility.

The key setup consideration is that MiMo has two billing routes, Pay-As-You-Go (PAYG) and Token Plan, and each route uses its own API endpoint. Mixing keys or endpoints between routes is the most common source of setup errors.

- Supported tools: OpenClaw, OpenCode, Claude Code, Kilo Code, Cline, Roo Code, Codex, Cherry Studio, Zed, Qwen Code.

- Two billing routes: PAYG and Token Plan. Their keys and endpoints are not interchangeable, so choose one route and stay consistent.
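
One way to keep routes from being mixed is to select both the key and the endpoint from a single route name, so a PAYG key can never be paired with a Token Plan endpoint. The sketch below is a minimal illustration, not Xiaomi's SDK. The URLs are placeholders rather than real MiMo endpoints, and `RouteConfig`, `make_config`, and the route labels are hypothetical names. Take the actual base URL for each route from the official tools overview.

```python
from dataclasses import dataclass

ROUTES = ("payg", "token_plan")

# Placeholder endpoints only (assumption): replace each value with the
# base URL that the official documentation lists for that route.
BASE_URLS = {
    "payg": "https://payg.example.invalid/v1",
    "token_plan": "https://token-plan.example.invalid/v1",
}


@dataclass(frozen=True)
class RouteConfig:
    """A key and endpoint that are guaranteed to share one route."""
    route: str
    api_key: str
    base_url: str


def make_config(route: str, api_keys: dict[str, str]) -> RouteConfig:
    """Build a config whose key and endpoint come from the same route."""
    if route not in ROUTES:
        raise ValueError(f"unknown route {route!r}; expected one of {ROUTES}")
    if route not in api_keys:
        raise ValueError(f"no API key stored for route {route!r}")
    return RouteConfig(route=route,
                       api_key=api_keys[route],
                       base_url=BASE_URLS[route])
```

A coding tool's settings would then be filled from one `RouteConfig` object instead of from separately copied key and URL fields.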

The broader MiMo-V2 family

MiMo-V2-Pro is the flagship model, but the MiMo-V2 family includes several other variants designed for different use cases and price points.

- MiMo-V2-Omni: an omni-modal agentic model that handles text, image, audio, and video inputs in a unified architecture.

- MiMo-V2-TTS: a text-to-speech model for voice synthesis. Currently free during the introductory period.

- MiMo-V2-Flash: a smaller, faster variant optimized for lower-latency use cases at reduced cost.

Start with the tools overview to pick the right route, then configure your preferred tool

The official tools overview page is the safest starting point. Pick PAYG or Token Plan first, then follow the setup guide for your specific coding tool.

Frequently asked questions

What is the context window of MiMo-V2-Pro?

MiMo-V2-Pro supports up to 1 million tokens of context. The official pricing page splits rates by route and context band: China and overseas rows are published separately, with lower 0-256K rates and higher 256K-to-1M rates.

Which coding tools does MiMo-V2-Pro support?

MiMo-V2-Pro officially supports OpenClaw, OpenCode, Claude Code, Kilo Code, Cline, Roo Code, Codex, Cherry Studio, Zed, and Qwen Code. Check the official tools overview page for the most current list.

What is the most common setup mistake with MiMo?

Mixing PAYG and Token Plan keys or endpoints. The two billing routes use different API configurations. Pick one route and use only its corresponding keys and endpoints to avoid authentication errors.