StepFun Step 3.5 Flash: 196B-Parameter Agent-First MoE Model (350 TPS)

StepFun Step 3.5 Flash, released in February 2026, is an agent-first Mixture-of-Experts model with 196.81 billion total parameters, of which only 11 billion are active per token. It combines 288 routed experts per layer (top-8 activation), Multi-Token Prediction (MTP-3), which predicts 4 tokens per forward pass, and Sliding Window Attention at a 3:1 ratio. The model reaches inference speeds of up to 350 tokens per second and scores 74.4% on SWE-bench Verified and 97.3% on AIME 2025. It is released under the Apache 2.0 license.

- 196.81B total / 11B active MoE with 288 routed experts per layer and top-8 activation.

- Multi-Token Prediction (MTP-3) predicts 4 tokens per forward pass for up to 350 TPS.

- Scores 74.4% on SWE-bench Verified, 97.3% on AIME 2025, and 86.4% on LiveCodeBench-V6.

- Apache 2.0 license, with decoding roughly 6x cheaper than DeepSeek V3.2 and 18.9x cheaper than Kimi K2.

Architecture: agent-first MoE with Multi-Token Prediction

StepFun Step 3.5 Flash is built on a 45-layer Mixture-of-Experts architecture with a hidden dimension of 4096. Each layer contains 288 routed experts plus 1 shared expert, and top-8 routing activates only 11 billion of the 196.81 billion total parameters per token. This sparse activation is what lets the model sustain high throughput without the per-token compute of a dense model of the same size.
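To make top-8 routing concrete, here is a minimal sketch of how one token could be assigned to 8 of 288 routed experts. It is a generic top-k router, not StepFun's implementation: the function name `route_token`, the random logits, and the choice to softmax-normalize over only the selected experts are all assumptions made for illustration.

```python
import math
import random

NUM_ROUTED_EXPERTS = 288  # routed experts per layer (from the article)
TOP_K = 8                 # experts activated per token (from the article)


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k experts for one token and normalize their weights.

    Normalizing over only the selected experts is an assumption of this sketch.
    """
    # Indices of the top_k largest logits, highest first.
    chosen = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Numerically stable softmax over the selected logits only.
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


# Hypothetical router scores for a single token.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_ROUTED_EXPERTS)]
selection = route_token(logits)
print(len(selection), round(sum(w for _, w in selection), 6))
```

Only the selected experts run for that token, which is why the active parameter count stays far below the total. The shared expert would run for every token in addition to these eight.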

A key innovation is Multi-Token Prediction (MTP-3), which predicts 4 tokens per forward pass instead of the standard 1. Combined with Sliding Window Attention at a 3:1 ratio (three sliding-window layers for every full-attention layer) and a vocabulary of 128,896 tokens, the model reaches inference speeds of up to 350 tokens per second. The 256K context window supports long-form agent interactions and complex coding sessions.
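The sketch below illustrates one way a 3:1 sliding-window layout could be arranged across 45 layers. It assumes the ratio means three sliding-window layers followed by one full-attention layer, repeated; the exact layer placement and the window size are not given in this article, so `layer_schedule`, `visible_positions`, and the `window=4` value are hypothetical.

```python
NUM_LAYERS = 45     # from the article
SWA_PER_FULL = 3    # assumed reading of the "3:1 ratio"


def layer_schedule(num_layers=NUM_LAYERS, swa_per_full=SWA_PER_FULL):
    """Label each layer "swa" or "full": swa_per_full SWA layers, then one full layer."""
    period = swa_per_full + 1
    return ["full" if i % period == period - 1 else "swa"
            for i in range(num_layers)]


def visible_positions(query_pos, kind, window):
    """Key positions a causal query at query_pos may attend to in a layer of this kind."""
    start = 0 if kind == "full" else max(0, query_pos - window + 1)
    return range(start, query_pos + 1)


schedule = layer_schedule()
print(schedule.count("swa"), schedule.count("full"))
print(list(visible_positions(10, "swa", window=4)))
print(list(visible_positions(10, "full", window=4)))
```

In a sliding-window layer, each query sees only a fixed number of recent positions, so attention cost per token stays bounded as the context grows. The interleaved full-attention layers keep access to the whole context.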

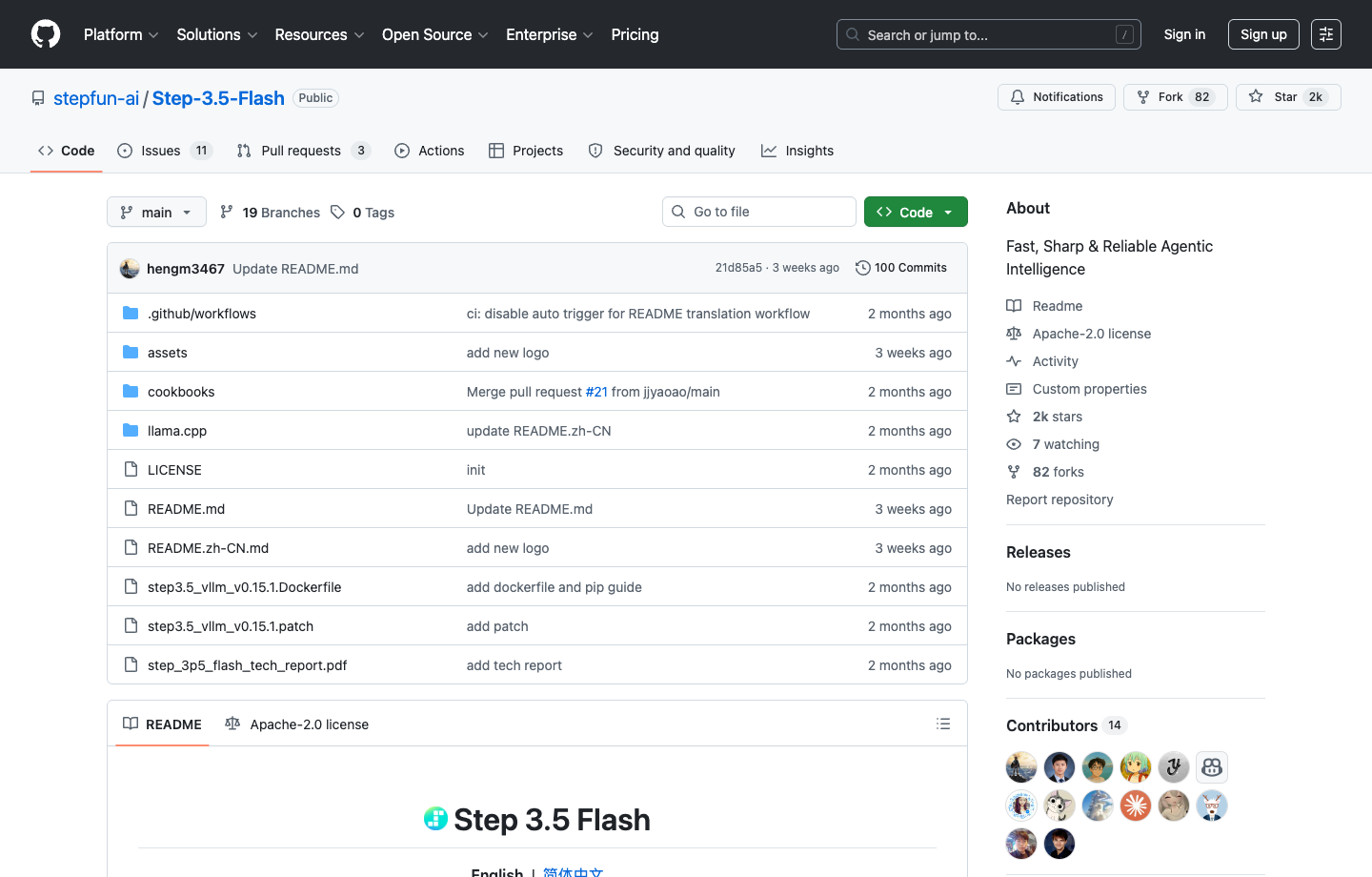

Official screenshot: the Step 3.5 Flash repo page exposes architecture and benchmark rows directly.

For public writing, the GitHub page is one of the best official assets because it makes the MoE architecture, benchmark table, and open-weight route visible without guesswork.

- Strong image for explaining why Step 3.5 Flash is framed around speed and decoding efficiency.

- Useful when readers want to verify that the model is an Apache 2.0 open release.

| Parameter | Value |
|---|---|
| Total parameters | 196.81B |
| Active parameters | 11B |
| Architecture type | Mixture-of-Experts (MoE) |
| Routed experts per layer | 288 |
| Shared experts per layer | 1 |
| Experts activated per token | 8 (top-8) + 1 shared |
| Layers | 45 |
| Hidden dimension | 4096 |
| Context window | 256K tokens |
| Vocabulary size | 128,896 |
| Multi-Token Prediction | MTP-3 (4 tokens per forward pass) |
| Attention mechanism | Sliding Window Attention (3:1 ratio) |
| Max inference speed | Up to 350 TPS |
| License | Apache 2.0 |
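A few lines of arithmetic show what the table's figures imply. This is a rough sketch: the constants come from the table, while `forward_passes` is a hypothetical helper that assumes every token predicted in a pass is kept, which is a best case rather than a measured rate.

```python
import math

TOTAL_PARAMS_B = 196.81   # total parameters, in billions
ACTIVE_PARAMS_B = 11.0    # active parameters per token, in billions
ROUTED_EXPERTS = 288
TOP_K = 8
TOKENS_PER_PASS = 4       # MTP-3, as stated in the table

active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B
routed_fraction = TOP_K / ROUTED_EXPERTS


def forward_passes(num_tokens, tokens_per_pass=TOKENS_PER_PASS):
    """Best-case forward passes needed, assuming every predicted token is kept."""
    return math.ceil(num_tokens / tokens_per_pass)


print(f"active parameters: {active_fraction:.1%}")
print(f"routed experts used per token: {routed_fraction:.1%}")
print(forward_passes(1000), "passes for 1000 tokens, versus 1000 at one token per pass")
```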

Benchmarks: competitive at a fraction of the cost

Step 3.5 Flash delivers benchmark results that compete with much larger models. It scores 74.4% on SWE-bench Verified, 51.0% on Terminal-Bench 2.0, and 97.3% on AIME 2025, with the AIME result placing it among the top publicly reported scores on that benchmark. On LiveCodeBench-V6 it reaches 86.4%, and on GAIA (no file) it scores 84.5.

The BrowseComp score is 51.6 without context and 69.0 with context, a 17.4-point gain that shows how much the model benefits from augmented retrieval. The tau2-Bench score of 88.2 indicates strong tool-use and agent capabilities.

| Benchmark | Step 3.5 Flash | DeepSeek V3.2 | Kimi K2.5 | GLM-4.7 | MiniMax M2.1 | MiMo-V2 Flash |
|---|---|---|---|---|---|---|
| SWE-bench Verified | 74.4% | - | 76.8% | - | - | - |
| Terminal-Bench 2.0 | 51.0% | - | 50.8% | - | - | - |
| tau2-Bench | 88.2 | - | - | - | - | - |
| BrowseComp | 51.6 (69.0 w/ ctx) | - | - | - | - | - |
| AIME 2025 | 97.3% | - | 96.1% | - | - | - |
| LiveCodeBench-V6 | 86.4% | - | 85.0% | - | - | - |
| GAIA (no file) | 84.5 | - | - | - | - | - |
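For the four rows where the table lists a Kimi K2.5 score, the gaps are easy to compute. The snippet below is just that subtraction; the dictionary layout and the `deltas` helper are illustrative, and the numbers are copied from the table.

```python
# (Step 3.5 Flash, Kimi K2.5) scores copied from the table above.
SCORES = {
    "SWE-bench Verified": (74.4, 76.8),
    "Terminal-Bench 2.0": (51.0, 50.8),
    "AIME 2025": (97.3, 96.1),
    "LiveCodeBench-V6": (86.4, 85.0),
}


def deltas(scores):
    """Return Step 3.5 Flash minus Kimi K2.5, in points, rounded to one decimal."""
    return {name: round(step - kimi, 1) for name, (step, kimi) in scores.items()}


for name, diff in deltas(SCORES).items():
    print(f"{name}: {diff:+.1f}")
```

Step 3.5 Flash trails by 2.4 points on SWE-bench Verified and leads by 0.2 to 1.4 points on the other three rows.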

Decoding cost advantage

One of the most compelling aspects of Step 3.5 Flash is its decoding efficiency. Because only 11 billion of its 196.81 billion parameters are active per token, and Multi-Token Prediction generates 4 tokens per forward pass, the model decodes at a much lower cost than models with similar capability.

Compared to DeepSeek V3.2, Step 3.5 Flash is approximately 6 times cheaper to decode. Compared to Kimi K2, it is approximately 18.9 times cheaper. This cost advantage makes it particularly attractive for high-volume production deployments where inference cost is a primary concern.

| Model | Relative decoding cost |
|---|---|
| Step 3.5 Flash | 1.0x (baseline) |
| DeepSeek V3.2 | 6.0x |
| Kimi K2 | 18.9x |
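The multipliers in the table can also be read as percentage savings. The sketch below does that conversion, assuming the multipliers apply uniformly to a decoding bill; `savings_vs` is a hypothetical helper name.

```python
# Relative decoding cost from the table above (Step 3.5 Flash is the baseline).
RELATIVE_DECODING_COST = {
    "Step 3.5 Flash": 1.0,
    "DeepSeek V3.2": 6.0,
    "Kimi K2": 18.9,
}


def savings_vs(model, table=RELATIVE_DECODING_COST):
    """Fraction of decoding cost saved by using the baseline instead of `model`."""
    return 1.0 - table["Step 3.5 Flash"] / table[model]


for name in ("DeepSeek V3.2", "Kimi K2"):
    print(f"vs {name}: {savings_vs(name):.1%} lower decoding cost")
```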

Access routes: PAYG API pricing and Step Plan subscriptions

StepFun now publishes two distinct official buying routes for Step 3.5 Flash. The direct API route appears on the public pricing page, where `step-3.5-flash` is listed at ¥0.7 per 1M uncached input tokens, ¥0.14 per 1M cached input tokens, and ¥2.1 per 1M output tokens. That is the cleanest public token-billing row for programmatic access.
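As a worked example of the PAYG rates, the following sketch estimates a bill from token counts. The prices are the ones quoted above; the function name `payg_cost_cny` and the sample token counts are made up for illustration, and real invoices may round or bill differently.

```python
from decimal import Decimal

# CNY per 1M tokens, as quoted above for `step-3.5-flash`.
PRICE_PER_MILLION = {
    "input": Decimal("0.7"),
    "cached_input": Decimal("0.14"),
    "output": Decimal("2.1"),
}
MILLION = Decimal(1_000_000)


def payg_cost_cny(input_tokens=0, cached_input_tokens=0, output_tokens=0):
    """Estimate PAYG cost in CNY for the given token counts."""
    usage = {
        "input": input_tokens,
        "cached_input": cached_input_tokens,
        "output": output_tokens,
    }
    return sum(
        (PRICE_PER_MILLION[kind] * Decimal(count) / MILLION
         for kind, count in usage.items()),
        Decimal(0),
    )


# Hypothetical workload: 2M uncached input, 1M cached input, 0.5M output tokens.
example_cost = payg_cost_cny(input_tokens=2_000_000,
                             cached_input_tokens=1_000_000,
                             output_tokens=500_000)
print(example_cost)
```

For this workload the estimate is 1.4 + 0.14 + 1.05 = 2.59 CNY. `Decimal` is used so that small per-token prices do not pick up floating-point noise.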

The Step Plan route is different. The official Step Plan overview sells Step 3.5 Flash through subscription bundles for tool-first usage, with Flash Mini at ¥49/month, Flash Plus at ¥99/month, Flash Pro at ¥199/month, and Flash Max at ¥699/month. The same page also documents a dedicated Step Plan base URL and positions the plan around OpenClaw, Claude Code, Trae, Cursor, and related tools rather than raw PAYG token billing.

- Step Plan uses a dedicated base URL: `https://api.stepfun.com/step_plan/v1`.

- The Step Plan overview currently lists `step-3.5-flash-2603`, `step-3.5-flash`, and `stepaudio-2.5-tts` in the supported-model lineup.

- The StepFun pricing page also lists StepAudio 2.5 TTS at ¥5.8 per 10,000 characters, which helps show the broader product surface without mixing TTS pricing into the Step 3.5 Flash token row.

- For buyer-facing writing, keep the API PAYG route and Step Plan subscription route separate. They are not the same billing surface.
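The dedicated base URL matters mainly when configuring a client. The sketch below builds, but does not send, a request against it using only the Python standard library. The `/chat/completions` path, the bearer-token header, and the payload shape are assumptions modeled on common chat-style APIs, not details confirmed by the Step Plan page; `build_chat_request` is a hypothetical helper.

```python
import json
import urllib.request

STEP_PLAN_BASE_URL = "https://api.stepfun.com/step_plan/v1"  # from the list above


def build_chat_request(api_key, prompt, model="step-3.5-flash",
                       base_url=STEP_PLAN_BASE_URL):
    """Build (but do not send) a chat request.

    The endpoint path, auth header, and payload shape are assumptions of
    this sketch; check the official Step Plan documentation before use.
    """
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("YOUR_API_KEY", "Hello")
print(request.get_method(), request.full_url)
```

Keeping the base URL as a parameter makes it easy to point the same code at the PAYG route instead, which fits the advice to treat the two billing surfaces separately.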

| Route | Public pricing or plan | Best fit | Notes |
|---|---|---|---|
| Direct API | ¥0.7 input / ¥0.14 cached input / ¥2.1 output per 1M tokens | Builders who want PAYG API billing | Published on the StepFun pricing details page under `step-3.5-flash`. |
| Step Plan Flash Mini | ¥49 / month | Light tool-first usage | Subscription route with 5-hour and weekly prompt quotas. |
| Step Plan Flash Plus | ¥99 / month | Frequent coding and agent work | Same Step Plan route, higher quota tier. |
| Step Plan Flash Pro | ¥199 / month | Heavy individual use | Same Step Plan route, larger quota tier. |
| Step Plan Flash Max | ¥699 / month | Team or production-style usage | Highest public Step Plan tier. |
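One rough way to compare the two routes is to ask how many PAYG output tokens each plan's monthly price would buy. This is only a yardstick: Step Plan quotas are expressed in prompts, not tokens, and input tokens are ignored here. The helper name `payg_output_equivalent_millions` is hypothetical.

```python
# Monthly plan prices in CNY, from the table above.
PLAN_PRICE_CNY = {
    "Flash Mini": 49,
    "Flash Plus": 99,
    "Flash Pro": 199,
    "Flash Max": 699,
}
OUTPUT_PRICE_PER_MILLION_CNY = 2.1  # PAYG output rate from the Direct API row


def payg_output_equivalent_millions(plan):
    """Millions of PAYG output tokens that cost the same as one month of `plan`."""
    return PLAN_PRICE_CNY[plan] / OUTPUT_PRICE_PER_MILLION_CNY


for plan in PLAN_PRICE_CNY:
    print(f"{plan}: about {payg_output_equivalent_millions(plan):.1f}M output tokens")
```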

Use Step 3.5 Flash when inference cost and speed matter as much as accuracy

The Apache 2.0 weights are the fastest path for experimentation. The API is the fastest path for production deployments that need high-throughput agentic workflows at low cost.

Frequently asked questions

What makes Step 3.5 Flash different from other MoE models?

Step 3.5 Flash has an unusually low active-parameter ratio (11B out of 196.81B, about 5.6%) and uses Multi-Token Prediction (MTP-3) to generate 4 tokens per forward pass. This combination yields up to 350 TPS and decoding costs roughly 6x lower than DeepSeek V3.2 and 18.9x lower than Kimi K2.

Is Step 3.5 Flash open source?

Yes. Step 3.5 Flash is released under the Apache 2.0 license. Weights are available on GitHub and Hugging Face.

How does Step 3.5 Flash compare on coding benchmarks?

It scores 74.4% on SWE-bench Verified and 51.0% on Terminal-Bench 2.0. While slightly below the top scorers on SWE-bench Verified (e.g., Kimi K2.5 at 76.8%), it delivers competitive results at a fraction of the inference cost.